Top 7 NLP Tools for Prior Art Detection


Apr 7, 2026

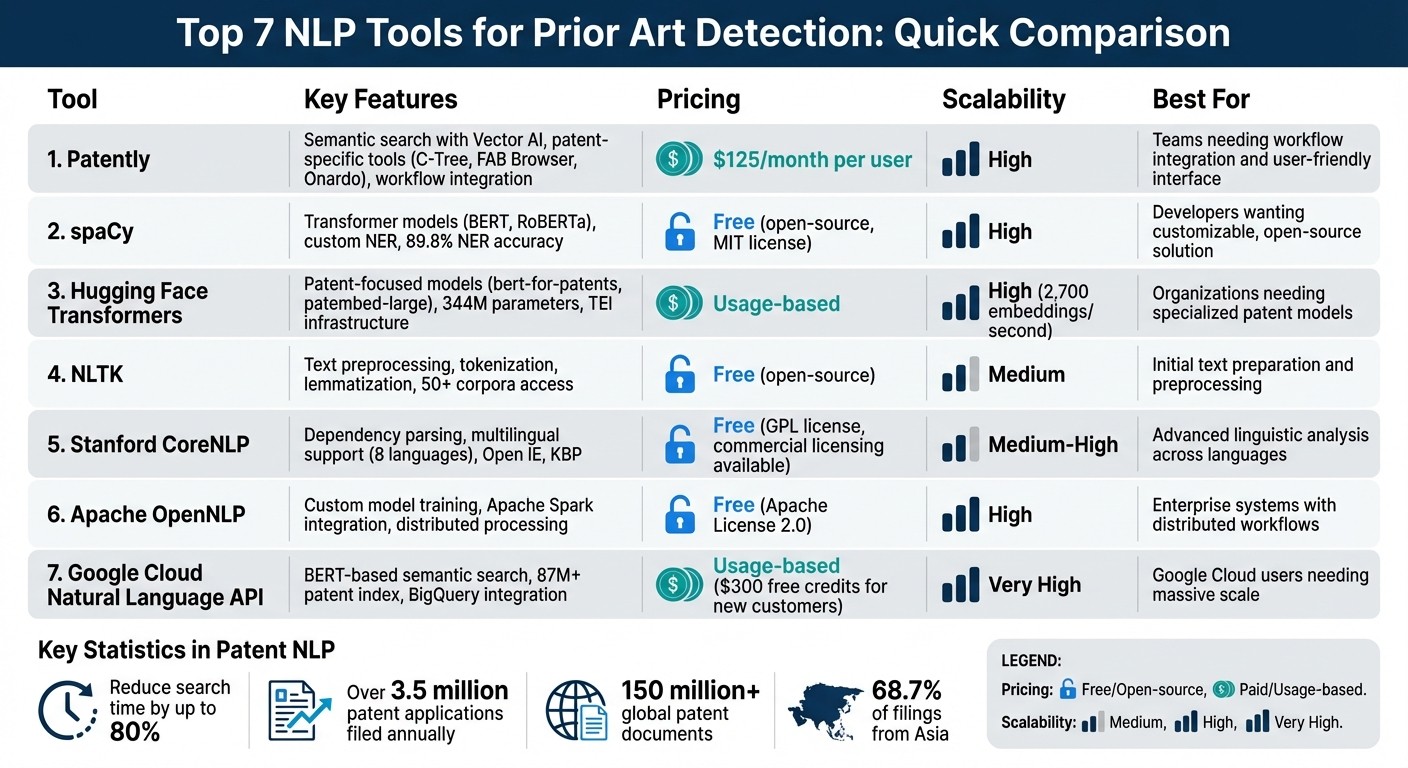

NLP tools dramatically speed prior-art searches by using semantic search, machine translation, and scalable pipelines for patent analysis.

Finding prior art for patents is challenging due to varying terminology and the sheer volume of filings - over 3.5 million annually. Natural Language Processing (NLP) tools simplify this process by using semantic search to identify conceptually similar inventions, even when language differs. These tools reduce search time by up to 80%, handle global databases with machine translation, and integrate with workflows for efficiency. Below are the top 7 NLP tools for prior art detection:

Patently: Offers semantic search with Vector AI, patent-specific tools, and seamless workflow integration. Paid plans start at $125 per user per month.

spaCy: Open-source library with transformer models like BERT, ideal for semantic search and custom patent analysis.

Hugging Face Transformers: Provides specialized models like bert-for-patents and scalable infrastructure for large datasets.

NLTK: A Python library for text preprocessing, including tokenization and lemmatization, suitable for initial patent text preparation.

Stanford CoreNLP: Java-based toolkit with advanced linguistic analysis, supporting dependency parsing and multilingual capabilities.

Apache OpenNLP: Machine learning toolkit for custom patent workflows, compatible with distributed systems like Apache Spark.

Google Cloud Natural Language API: Uses BERT for semantic search, integrates with Google Cloud tools, and scales for large datasets.

Quick Comparison

| Tool | Key Features | Pricing | Scalability | Strengths |
|---|---|---|---|---|
| Patently | Semantic search, patent-specific tools | $125/month+ | High | Workflow integration, user-friendly |
| spaCy | Transformer models, custom NER | Free (open-source) | High | Open-source, customizable |
| Hugging Face | Patent-focused models, TEI | Usage-based | High | Specialized models, scalability |
| NLTK | Preprocessing tools | Free (open-source) | Medium | Text preparation |
| Stanford CoreNLP | Linguistic analysis, multilingual | Free (GPL license) | Medium-High | Advanced parsing |
| Apache OpenNLP | Custom training, distributed systems | Free (Apache License) | High | Integration with enterprise systems |
| Google Cloud NLP API | BERT-based semantic search | Usage-based | Very High | Google Cloud integration |

These tools cater to different needs, from basic text analysis to advanced semantic search, and are transforming how patent professionals detect prior art. Choose based on your workflow, dataset size, and budget.


1. Patently

Semantic Search Capabilities

Patently's Vector AI technology does more than just match keywords - it understands the context behind patent claims. This means it can identify relevant prior art even when technical language differs. For patent professionals dealing with inventions described in varying terms across jurisdictions, this feature is a game-changer, ensuring critical references aren't overlooked.

Tools for Patent-Specific Text Analysis

Patently comes equipped with tools designed specifically for patent documents. Features like C-Tree, which visually maps patent families, and FAB Browser, which traces citations, make navigating complex data easier. Additionally, Onardo offers generative AI patent drafting tools, integrating prior art findings directly into new applications. For those needing quick insights, the automated claim charting tool maps patents against technical documentation in just minutes.

Seamless Integration with Patent Workflows

Patently isn't just about advanced search - it fits right into existing patent workflows. With hierarchical project categories, users can organize research by department, profit center, or legal matter, making it easy to track multiple cases. Team collaboration is simplified with customizable access controls, and export options allow data to be pulled into other systems your firm relies on.

Flexible for Large Datasets

Whether you're conducting a single search or managing extensive enterprise operations, including AI-enabled patent analysis for landscapes, Patently scales to meet your needs. Pricing starts with a free plan for limited individual use, moves to a Starter plan at $125 per month per user for teams of up to 10, and offers tailored Enterprise solutions for larger organizations. Law firms managing client portfolios can benefit from the Law Firm+ plan, which focuses on matter-centric management to keep client work organized and accessible. Together, these features make Patently an indispensable tool for modern patent research.


2. spaCy

spaCy is a standout tool in the NLP landscape, offering advanced features tailored to semantic analysis and patent-focused applications.

Semantic Search Capabilities

With its pretrained word vectors and transformers like BERT and RoBERTa, spaCy enables efficient semantic search. It reduces inflected forms of words (e.g., "organizing" and "organized" become "organize"), ensuring that grammatical variations are treated as a single concept. This is particularly helpful when navigating international patents, where linguistic differences often arise.

The en_core_web_trf transformer pipeline reports an F-score of about 89.8% for Named Entity Recognition (NER) on the OntoNotes 5.0 corpus. That benchmark covers general-domain text, so results on patent language may differ, but it makes the pipeline a solid starting point for identifying technical entities in patent texts. Additionally, its dependency parsing feature helps break down complex patent claims by analyzing grammatical relationships, a critical step for interpreting dense legal language.

Tools for Patent-Focused Text Analysis

spaCy's PhraseMatcher is highly effective for identifying and labeling technical terms within patent disclosures. By using predefined terminology lists, it simplifies the task of pinpointing key entities. Users can also train custom NER models on patent-specific datasets, such as USPTO patent text labeled with International Patent Classification (IPC) codes, and refine them using Active Learning tools like Prodigy.

"The trained NER model will learn to label entities not only from the pre-labelled training data. It will learn to find and recognise entities also depending on the given context." - Nikita Kiselov, Machine Learning Engineer

The spacy-llm package integrates Large Language Models into spaCy's structured NLP workflows. This allows for rapid prototyping of tools for analyzing prior art, even without extensive training datasets. Moreover, since patent texts are generally copyright-free according to the USPTO, they serve as an ideal dataset for training spaCy models for commercial applications.
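The PhraseMatcher approach described earlier in this section can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the term list, the `TECH_TERM` label, and the `find_terms` helper are assumptions made for the example, and a blank English pipeline is used so that no trained model needs to be downloaded.

```python
import spacy
from spacy.matcher import PhraseMatcher


def find_terms(text, terms, label="TECH_TERM"):
    """Return (label, matched text) pairs for every listed term found in text."""
    # A blank pipeline only tokenizes, which is all PhraseMatcher needs here.
    nlp = spacy.blank("en")
    # attr="LOWER" makes matching case-insensitive.
    matcher = PhraseMatcher(nlp.vocab, attr="LOWER")
    matcher.add(label, [nlp.make_doc(term) for term in terms])

    doc = nlp(text)
    # Each match is (match_id, start_token, end_token); sort by position in the text.
    matches = sorted(matcher(doc), key=lambda m: m[1])
    return [
        (nlp.vocab.strings[match_id], doc[start:end].text)
        for match_id, start, end in matches
    ]


# Illustrative terminology list and sentence (assumptions, not real patent data).
found = find_terms(
    "The fastening mechanism includes a Clip Assembly.",
    ["clip assembly", "fastening mechanism"],
)
```

In practice the terminology list would likely come from a curated glossary or classification scheme, and the matches could seed training data for a custom NER model.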

Scalability for Large Datasets

spaCy is built to handle large-scale NLP tasks efficiently. Written in Cython with optimized memory management, it processes enormous datasets - like entire web dumps - at high speed. This capability is essential when working with the global patent corpus, which contains over 150 million documents.

"If your application needs to process entire web dumps, spaCy is the library you want to be using." - spaCy.io

With support for over 75 languages, spaCy offers flexibility through multiple trained pipelines, allowing users to balance transformer-based accuracy with CPU-efficient performance. Its "project system" streamlines end-to-end workflows, covering everything from data preprocessing to training, making it production-ready for automation tasks. And as an open-source library under the MIT license, spaCy provides enterprise-grade functionality without licensing fees.

These features position spaCy as a critical tool for modern NLP applications, particularly in workflows related to prior art detection and patent analysis.

3. Hugging Face Transformers

Hugging Face Transformers simplifies the analysis of complex patent texts by converting them into high-dimensional vector embeddings. This approach enables semantic searches that go beyond exact keyword matches, identifying conceptually similar prior art even when different terminology is used. For instance, it can link terms like "fastening mechanism" with "clip assembly" by recognizing the underlying technical concepts.
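To make the idea of embedding-based matching concrete, here is a minimal sketch of ranking documents by cosine similarity. The three-dimensional vectors are hand-made placeholders, not output from any real model, and the helper names are assumptions for illustration; a real system would obtain vectors with hundreds or thousands of dimensions from an embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, doc_vecs):
    """Return (doc_id, score) pairs sorted from most to least similar."""
    scored = [
        (doc_id, cosine_similarity(query_vec, vec))
        for doc_id, vec in doc_vecs.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Placeholder vectors chosen by hand for illustration only.
toy_embeddings = {
    "clip assembly": [0.9, 0.1, 0.2],
    "battery electrolyte": [0.1, 0.9, 0.3],
}
query_vec = [0.8, 0.2, 0.1]  # stands in for "fastening mechanism"

ranking = rank_by_similarity(query_vec, toy_embeddings)
```

With these placeholder values, "clip assembly" ranks above "battery electrolyte" even though it shares no words with the query, which is the behavior semantic search relies on.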

Semantic Search Capabilities

The platform offers specialized models, such as bert-for-patents and patembed-large, designed specifically for the technical and legal language found in intellectual property documents. The patembed-large model, for example, is a 24-layer transformer with 344 million parameters, capable of generating 1,024-dimensional embeddings. Fine-tuning these models with expert-curated patent data enhances their precision, making them highly effective for production use.

Hugging Face’s semantic tools have also proven their value in real-world applications. In June 2024, AI firm XLSCOUT partnered with Hugging Face’s Expert Support Program to develop ParaEmbed 2.0. By fine-tuning open-source models like BGE-base-v1.5 and Llama 2 on high-quality patent data from multiple domains, they achieved a 23% accuracy improvement over their earlier version. XLSCOUT also upgraded their infrastructure, moving from a custom TorchServe setup to Hugging Face Inference Endpoints with Text Embedding Inference (TEI). This change boosted their production throughput from 300 embeddings per second to an impressive 2,700 embeddings per second - a ninefold improvement.

"This partnership combines the unparalleled capabilities of Hugging Face's open-source models, tools, and team with our deep expertise in patents. By fine-tuning these models with our proprietary data, we are poised to revolutionize how patents are drafted, analyzed, and licensed." - Sandeep Agarwal, CEO, XLSCOUT

Scalability for Large Datasets

Hugging Face Transformers supports seamless interoperability across frameworks like PyTorch, TensorFlow, and JAX, enabling models to be trained in one framework and deployed in another. For production, models can be exported to optimized formats such as ONNX or TorchScript, ensuring high performance when processing massive global patent datasets - spanning over 170 million publications. The Text Embedding Inference (TEI) container further enhances scalability, offering built-in load balancing and high-throughput capabilities crucial for large-scale prior art searches.
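A quick back-of-the-envelope calculation shows what the reported throughput change could mean at corpus scale. The sketch below assumes, purely for illustration, one embedding per publication and a single endpoint running at a constant rate; real patents are usually split into several chunks, so actual times would be longer.

```python
def embedding_hours(num_docs, embeddings_per_second):
    """Hours needed to embed num_docs items at a constant rate."""
    return num_docs / embeddings_per_second / 3600


CORPUS_SIZE = 170_000_000  # publications, figure quoted in the text above

hours_before = embedding_hours(CORPUS_SIZE, 300)   # earlier TorchServe setup
hours_after = embedding_hours(CORPUS_SIZE, 2700)   # TEI on Inference Endpoints
speedup = hours_before / hours_after
```

Under these assumptions the full corpus drops from roughly 157 hours to about 17.5 hours of embedding time, matching the ninefold improvement.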

4. NLTK

NLTK is a Python library designed to handle essential text-processing tasks like tokenization, lemmatization, and part-of-speech tagging. These tools are particularly useful for preparing unstructured patent texts for downstream steps such as semantic search.

Tools for Patent-Specific Text Analysis

NLTK also caters to patent-specific needs. It connects to over 50 corpora and lexical resources, including WordNet, which allows for semantic reasoning - an asset in patent analysis. With features like Named Entity Recognition (NER) and part-of-speech tagging, NLTK helps identify and classify crucial elements in patent documents. This includes pinpointing inventors, companies, and technologies while clarifying the grammatical roles of technical and legal terms. Additionally, its WordNetLemmatizer simplifies words to their base forms, ensuring consistency across variations like "blending" and "blend." This is especially important for matching prior art references.
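The normalization step can be sketched with NLTK as follows. Note the swap: `WordNetLemmatizer` needs the WordNet corpus to be downloaded first, so this example uses NLTK's `PorterStemmer`, which needs no extra data, to illustrate the same idea of collapsing word variants. A stemmer is cruder than a lemmatizer and can produce non-words for some inputs. The `normalize` helper and the sample sentence are assumptions for illustration.

```python
from nltk.stem import PorterStemmer
from nltk.tokenize import RegexpTokenizer

# Keep alphabetic runs only; punctuation and digits are dropped in this sketch.
_tokenizer = RegexpTokenizer(r"[A-Za-z]+")
_stemmer = PorterStemmer()


def normalize(text):
    """Tokenize text and reduce each token to a lowercase stem."""
    return [_stemmer.stem(token) for token in _tokenizer.tokenize(text)]


stems = normalize("Blending, blended, blends")
```

If you switch to `WordNetLemmatizer`, keep in mind that it treats words as nouns by default, so verb forms such as "blending" generally need `pos="v"` to be reduced to "blend".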

Handling Large Patent Datasets

While NLTK is great for preprocessing, it struggles with scalability when dealing with massive patent datasets. For context, the global patent corpus exceeds 150 million documents, and a single patent description often contains over 11,000 tokens - far beyond the context limits of many standard NLP models. To handle such demands, NLTK includes wrappers for faster NLP libraries, making it a solid choice for initial text preparation. This step is particularly helpful before using advanced semantic search tools to detect prior art. The unique nature of patent language, often filled with newly created or self-defined terms, further emphasizes the need for precision when maintaining consistent terminology across these extensive documents.
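One common way to work around context limits is to split a long token sequence into overlapping windows before passing it to a model. The sketch below is a plain-Python illustration; the window size of 512 and overlap of 64 are assumed values, not figures from any specific model or from NLTK.

```python
def chunk_tokens(tokens, window=512, overlap=64):
    """Split tokens into windows of at most `window` items that overlap by `overlap`."""
    if not 0 <= overlap < window:
        raise ValueError("overlap must be non-negative and smaller than window")
    step = window - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + window])
        # Stop once a window has reached the end of the sequence.
        if start + window >= len(tokens):
            break
    return chunks


# Stand-in for a tokenized patent description of about 11,000 tokens.
tokens = [f"tok{i}" for i in range(11_000)]
chunks = chunk_tokens(tokens)
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks, at the cost of processing some tokens twice.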

5. Stanford CoreNLP

Stanford CoreNLP is a Java-based toolkit designed to deliver in-depth linguistic analysis, making it especially useful for working with patent texts. The Stanford NLP Group describes it as "your one stop shop for natural language processing in Java!" Its features are particularly handy for untangling the complex technical relationships often found in patent claims.

Semantic Search Capabilities

One of CoreNLP's standout features is its pipeline of annotators, which allows for advanced semantic analysis. It includes tools like Open Information Extraction (Open IE) and Knowledge Base Population (KBP), which extract linguistic relationships and triples from raw text. This enables search engines to match technical concepts instead of relying solely on keyword matching. Additionally, dependency and constituency parsing help uncover structural relationships within patent claims. The results can be exported in formats like JSON, XML, or CoNLL, making it easy to integrate with semantic search databases or other patent analysis tools.

Support for Patent-Specific Text Analysis

CoreNLP addresses the unique challenges of analyzing patent language with several specialized features. For instance, it can normalize numeric quantities, dates, and times, which is critical for identifying technical parameters and priority dates in prior art documents. Its SUTime component provides rule-based temporal tagging, which is particularly useful for handling filing and priority dates. The RegexNER tool allows users to define custom regular expressions for tagging domain-specific technical terms. CoreNLP also supports eight languages, including English, Chinese, German, and French, making it a versatile tool for conducting prior art searches across various international patent systems.

Integration with Patent Workflows

CoreNLP integrates seamlessly into patent workflows through its native Java API, command-line interface, or web service, which processes HTTP/JSON requests. It can also be accessed using Python, JavaScript, Ruby, and .NET via third-party APIs or the official Stanza Python library. For workflows requiring high throughput, running CoreNLP in server mode can eliminate the need to reload models for every request, saving time. Users can further optimize processing speed by customizing the annotation pipeline to include only the necessary annotators, such as tokenize, pos, and lemma. CoreNLP is licensed under the GNU General Public License v3 or later, with commercial licensing options available through Stanford University for proprietary software.
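As a rough sketch of the server-mode workflow, the snippet below builds a request URL for a CoreNLP server with a trimmed annotator list and shows how text could be posted to it. It assumes a server is already running at `http://localhost:9000`; the helper names are hypothetical, and nothing is sent until `annotate` is called.

```python
import json
import urllib.parse
import urllib.request


def build_corenlp_url(base_url, annotators):
    """Encode the pipeline settings into the server's `properties` URL parameter."""
    props = {"annotators": ",".join(annotators), "outputFormat": "json"}
    return base_url.rstrip("/") + "/?properties=" + urllib.parse.quote(json.dumps(props))


def annotate(text, url):
    """POST raw text to the server and return the parsed JSON annotations."""
    request = urllib.request.Request(url, data=text.encode("utf-8"), method="POST")
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


# Only the annotators named in the text; older CoreNLP releases may also need "ssplit".
url = build_corenlp_url("http://localhost:9000", ["tokenize", "pos", "lemma"])
```

Because the server keeps models in memory between requests, repeated calls to `annotate` avoid the model-loading cost that a fresh command-line run would pay each time.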

Scalability for Large Datasets

When processing large patent datasets, efficient memory management becomes crucial. On a 64-bit machine, CoreNLP typically needs 2 GB of RAM, but more complex annotators may require up to 6 GB. For memory-intensive tasks like statistical coreference resolution, allocating at least 6 GB of RAM (using the -Xmx6g flag) is recommended to avoid performance bottlenecks. To handle large datasets effectively, use the -fileList flag for batch processing, which reduces the overhead of loading models - this process alone can take about a minute during startup. Additionally, the ProtobufAnnotationSerializer provides an efficient, lossless way to store and share annotated data, making it ideal for large-scale applications.
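The batch setup described above can be sketched as a small helper that assembles the command line. This only builds the argument list; it assumes the command would be run from the CoreNLP distribution directory so that `-cp "*"` picks up the jars, and the file name `patents.txt` is a placeholder.

```python
def build_batch_command(file_list_path, annotators, heap_gb=6):
    """Assemble a CoreNLP command that processes every file named in file_list_path."""
    return [
        "java",
        f"-Xmx{heap_gb}g",                 # JVM heap size, e.g. -Xmx6g
        "-cp", "*",                        # assumes the CoreNLP jars are in the working directory
        "edu.stanford.nlp.pipeline.StanfordCoreNLP",
        "-annotators", ",".join(annotators),
        "-fileList", str(file_list_path),  # text file with one input path per line
        "-outputFormat", "json",
    ]


command = build_batch_command("patents.txt", ["tokenize", "pos", "lemma"])
# To execute: subprocess.run(command, check=True) from the CoreNLP directory.
```

Passing all inputs through one file list means the models load once for the whole batch rather than once per document.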

Stanford CoreNLP is a powerful tool for tackling the challenges of patent analysis, offering features that cater to both detailed linguistic analysis and large-scale processing needs. Its flexibility and scalability make it a key resource for efficient prior art detection.

6. Apache OpenNLP

Apache OpenNLP is a machine learning toolkit built in Java, designed to handle natural language processing tasks. Its flexibility makes it a strong candidate for patent workflows, especially when integrated with Java-based enterprise systems. However, it requires custom training on patent-specific datasets to deliver optimal results, which allows it to adapt to the unique demands of patent analysis.

Semantic Search Capabilities

OpenNLP's architecture supports advanced semantic search tailored for patent analysis. Tools like the Document Categorizer and Entity Linker help classify documents into specific categories and link entities to knowledge bases. By incorporating ONNX Runtime, users can integrate modern transformer models for enhanced performance.

For example, the Document Categorizer can assign IPC (International Patent Classification) codes to patents, which helps narrow down prior art searches before diving deeper. Additionally, the Named Entity Recognition (NER) feature can be trained to identify technical terms, chemical names, or legal entities within patent claims, provided it has access to annotated training data.

Support for Patent-Specific Text Analysis

To handle the complex language of patents, OpenNLP requires custom model training. Its TokenNameFinderTrainer enables users to train models on annotated patent datasets, such as those from the USPTO or EPO. The toolkit also includes a Morfologik addon for dictionary-based lemmatization, which helps standardize technical terms across documents.

Another useful feature is the NumberCharSequenceNormalizer, which cleans up dense patent text by standardizing numerical data, dates, and citations. This normalization ensures consistency, making it easier to process and analyze patent documents.

Integration with Patent Workflows

Bohdan Durbak, CEO of TheAppSolutions, highlights OpenNLP's practicality:

"Apache OpenNLP is an open-source library for those who prefer practicality and accessibility... you can configure OpenNLP in the way you need and get rid of unnecessary features".

OpenNLP integrates smoothly into patent workflows via its native Java API and support for Apache UIMA (Unstructured Information Management Architecture), a framework for processing unstructured data in enterprise environments. According to the Apache OpenNLP documentation:

"OpenNLP API can be easily plugged into distributed streaming data pipelines like Apache Flink, Apache NiFi, Apache Spark".

This compatibility allows it to work seamlessly with distributed systems, making it suitable for large-scale patent analysis.

Scalability for Large Datasets

OpenNLP is equipped to handle extensive datasets, thanks to its string interning feature, which reduces memory usage when processing large text volumes. For even greater efficiency, users can implement a custom string interner using the -Dopennlp.interner.class flag. When dealing with millions of patent documents, OpenNLP can be integrated with Apache Spark to enable parallel processing across distributed clusters. This setup allows for horizontal scaling by simply adding more nodes as the dataset expands.
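String interning is easiest to see in a tiny example. OpenNLP's interner is a Java component, so the Python sketch below is not its API; it only illustrates the general idea using the standard library's `sys.intern`, with a hypothetical `intern_tokens` helper.

```python
import sys


def intern_tokens(tokens):
    """Replace each token with a shared, canonical copy of the same string."""
    return [sys.intern(token) for token in tokens]


# Build equal strings at runtime, as a tokenizer would when reading documents.
raw_tokens = ["".join(["cl", "aim"]) for _ in range(3)]
interned = intern_tokens(raw_tokens)

# After interning, all equal tokens refer to one object in memory.
shared = all(token is interned[0] for token in interned)
```

In a corpus where words like "claim" or "wherein" appear millions of times, storing one shared copy per distinct token instead of one copy per occurrence is where the memory savings come from.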

Its Java-based design ensures smooth integration with enterprise-level big data systems, and components like LanguageDetectorFactory can be extended to account for the linguistic intricacies of patent language.

Distributed under the Apache License, Version 2.0, OpenNLP is free to use for both commercial and non-commercial purposes. This open-access licensing model removes financial barriers, making it an appealing choice for organizations aiming to build scalable patent analysis systems. These capabilities position Apache OpenNLP as a powerful tool for patent professionals managing prior art detection and other analytical tasks.

7. Google Cloud Natural Language API

The Google Cloud Natural Language API draws on Google's BERT-family language models to decode the often complex and context-heavy language found in patents. Google has also published a patent-specific BERT model trained on over 100 million patent publications. This foundation enables precise semantic searches and simplifies patent workflow processes.

Semantic Search Capabilities

The API goes beyond simple keyword matching by analyzing a patent's title, abstract, description, and claims to locate related patents and non-patent literature. This approach identifies conceptually similar documents, not just those with matching terms. It also employs entity analysis to categorize prior art, pinpointing technical components, organizations, and dates. As Rob Srebrovic and Jay Yonamine from Google Global Patents put it:

"The patents domain is ripe for the application of algorithms like BERT due to the technical characteristics of patents as well as their business value".

For best results, the API is often used as a "first-pass discovery layer" within a "precision ladder" workflow. This means initial semantic search results are refined using classification codes like CPC or IPC. To further enhance accuracy, users can manually adjust search terms by removing generic words like "system" or "device" and adding more technical, domain-specific terms. These features make it easy to integrate the API into existing patent analysis systems.
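The refinement steps in this "precision ladder" can be sketched in plain Python. The result records, document IDs, scores, and helper names below are made up for illustration, and the generic-term list contains only the two examples named above; real results would come from whatever search service you use.

```python
GENERIC_TERMS = {"system", "device"}  # examples from the text; extend as needed


def refine_query(terms, extra_terms=()):
    """Drop generic words and append more specific technical terms."""
    kept = [t for t in terms if t.lower() not in GENERIC_TERMS]
    return kept + [t for t in extra_terms if t not in kept]


def filter_by_cpc(results, cpc_prefixes):
    """Keep results that carry at least one CPC code starting with a given prefix."""
    prefixes = tuple(cpc_prefixes)
    return [
        r for r in results
        if any(code.startswith(prefixes) for code in r["cpc_codes"])
    ]


# Hypothetical first-pass semantic search output.
first_pass = [
    {"id": "DOC-A", "score": 0.91, "cpc_codes": ["F16B2/20"]},
    {"id": "DOC-B", "score": 0.88, "cpc_codes": ["H01M10/0525"]},
]

query = refine_query(["fastening", "device", "system"], extra_terms=["spring clip"])
shortlist = filter_by_cpc(first_pass, ["F16B"])
```

Semantic ranking casts a wide net, and the classification filter then narrows it to the technical field you care about.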

Integration with Patent Workflows

The API fits seamlessly into patent workflows via REST and RPC interfaces, allowing developers to create custom tools for prior art detection. It connects with Google Patents Public Datasets and BigQuery, opening up advanced analytics across Google's vast index of more than 87 million patent documents from 17+ authorities. For added functionality, it can be paired with the Vision API for OCR on scanned patents and the Translation API to analyze prior art in multiple languages.

Scalability for Large Datasets

Built on Google Cloud's infrastructure, the API is designed to handle large-scale patent processing with ease. Using the gcsContentUri parameter, it can analyze files stored in Google Cloud Storage, eliminating the need to send large document contents directly in request bodies. When combined with Vertex AI, users gain access to Gemini models for advanced reasoning and summarization of vast, unstructured patent datasets. This scalability ensures efficient processing of even the largest patent repositories. Pricing is based on usage, with new customers receiving $300 in credits to get started.
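As a hedged sketch of the Cloud Storage option, the snippet below builds the JSON body for an entity-analysis request that points at a file instead of embedding its text. The bucket and object names are placeholders, the helper is hypothetical, and authentication and the actual HTTP call are left out.

```python
import json

ANALYZE_ENTITIES_URL = "https://language.googleapis.com/v1/documents:analyzeEntities"


def build_entities_request(gcs_uri):
    """Return the request body for analyzing a plain-text file stored in Cloud Storage."""
    if not gcs_uri.startswith("gs://"):
        raise ValueError("expected a gs:// URI")
    return {
        # gcsContentUri replaces the inline `content` field for stored files.
        "document": {"type": "PLAIN_TEXT", "gcsContentUri": gcs_uri},
        "encodingType": "UTF8",
    }


# Placeholder bucket and object path.
request_body = build_entities_request("gs://example-bucket/patents/claims.txt")
payload = json.dumps(request_body)
```

The payload would then be sent as an authenticated POST to `ANALYZE_ENTITIES_URL`, or the same document reference could be passed through one of the official client libraries.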

Feature Comparison

Picking the right NLP tool for prior art detection boils down to three main considerations: search methodology, workflow integration, and pricing. Traditional keyword-based systems often fall short, missing key references due to synonyms or evolving terminology. In this space, Patently stands out by offering a solution that combines ease of use with powerful performance.

Patently uses Vector AI semantic search to deliver top-notch patent analysis. It's built into a collaborative platform that simplifies tasks like patent drafting, project management, and SEP analytics. Pricing starts at $125 per month per user for the Starter plan, making it accessible while still offering enterprise-level features.

Other tools approach prior art detection in different ways. Open-source options, for example, are free but require significant in-house resources and expertise to create effective systems. Pay-as-you-go models, on the other hand, provide flexibility, especially for smaller projects or testing purposes.

When it comes to efficiency, workflow integration plays a critical role. As Kammie Sumpter from DeepIP explains:

"The real differentiator is not raw search depth or analytics dashboards. It is workflow integration".

AI-driven tools can cut search times by up to 80%, all while maintaining over 90% accuracy. Considering that Asia accounts for 68.7% of global patent filings, tools with multi-jurisdictional coverage and machine translation capabilities are essential for thorough prior art detection.

The AI patent search market is expected to grow significantly - from $1.3 billion in 2026 to $5.37 billion by 2035. This reflects a broader shift toward integrated platforms that connect search, drafting, prosecution, and portfolio management. Patently’s comprehensive features are designed to enhance workflow efficiency and improve success rates in patent searches. To truly evaluate NLP tools, it’s often more effective to test them with your own documents and specific rejection patterns, rather than relying solely on feature comparisons.

Conclusion

NLP tools are transforming how patent professionals tackle prior art detection. These tools go beyond basic keyword searches, interpreting the intent and functionality behind technical descriptions. This enables them to identify conceptually similar inventions, even when different terms are used. With over 3.5 million patent applications filed globally each year and the growing complexity of search requirements, traditional methods struggle to keep up.

The benefits of AI-driven tools are clear. They can reduce search times by up to 80% and provide access to patents across more than 100 jurisdictions through automatic translation - a crucial feature, considering that Asia alone accounts for 68.7% of global filings. Additionally, these tools can scan non-patent literature, such as academic journals and conference papers, uncovering public disclosures that might be missed in patent-only searches.

When selecting a tool, it’s essential to test it on your actual cases. Use trial periods to compare results with your current methods. Focus on areas in your workflow that consume the most time, whether it’s initial searches, drafting applications, or responding to office actions. Choose tools that address these specific challenges. Also, ensure the platform has robust security measures like SOC 2 Type II certification and transparent policies about using client data for training models.

AI-powered tools streamline patent research and analysis, boosting overall efficiency. Many firms report seeing a return on investment within 6–12 months by saving attorney hours and offsetting platform costs. Each tool mentioned in this article has unique strengths, so hands-on testing is the best way to find the right fit for your needs. This practical, focused approach is shaping the future of patent research.

FAQs

How does semantic search find prior art when the wording is different?

Semantic search goes beyond matching exact keywords - it focuses on understanding the meaning behind the words. By using vector embeddings, it mathematically maps concepts, allowing it to find related documents based on their semantic content, even when the wording differs. This approach ensures a deeper and more accurate connection between ideas, making it easier to locate relevant prior art.

What data do I need to train a patent-specific NLP model?

To develop an NLP model tailored for patents, you'll need high-quality, labeled data that mirrors the characteristics of patent texts. This includes annotations like classifications, named entities, or legal specifics. Such data helps the model understand the unique language and structure found in patent documents.

How can Patently fit into my current patent search workflow?

Patently fits right into your patent search routine, offering an AI-driven platform designed for searching, reviewing, and drafting patents. With advanced semantic search powered by Vector AI, it goes beyond simple keyword searches, delivering more accurate prior art results. The platform also lets you handle tasks like FTO studies and competitor analyses, organize your data, and collaborate with ease. Plus, built-in tools for drafting claims and creating figures simplify the workflow, boosting efficiency - all within one integrated workspace.