Study: NLP Accuracy in Patent Abstract Summaries

Intellectual Property Management

Mar 12, 2026

Evaluation of LLMs for patent abstract summarization—high semantic scores (BERTScore 0.85–0.89), improved search and drafting, but hallucination and consistency risks remain.

Patent abstracts are critical for understanding inventions, aiding searches, and ensuring legal clarity. This study evaluates how well NLP models perform in generating these summaries compared to human experts. Here’s what you need to know:

Why It Matters: Patent abstracts guide decisions on novelty, patentability, and legal clearance. Errors can lead to misunderstandings or risks.

NLP’s Role: AI is being tested to handle the rising volume of patents, offering speed and scalability that humans alone can’t achieve.

Key Findings:

Applications: NLP can improve patent searches, classification, and AI patent drafting, but human oversight is still necessary.

The research highlights progress in AI summarization but stresses the need for better accuracy and consistency.

Saramsh - Patent Document Summarization using BART | Workshop Capstone

Study Overview and Methodology

Researchers analyzed NLP models using a variety of large-scale patent datasets spanning multiple technological fields. The primary benchmark was the BIGPATENT dataset, which includes 1.3 million U.S. patent documents paired with human-written summaries. These documents are categorized into nine distinct Cooperative Patent Classification (CPC) groups, offering a diverse testing ground for evaluation. Another key resource was the Harvard USPTO Patent Dataset (HUPD), containing utility patent applications filed between 2004 and 2018. This dataset stood out for its detailed metadata and semi-structured format, making it easier to extract sections like the "Summary of the Invention".

To test retrieval performance, the study utilized the CLEF-IP 2013 dataset, which includes patent documents from the European Patent Office and WIPO. This dataset was refined to 24 unique patents, each linked to 2 to 8 manually identified relevant documents. Additionally, the USPTO (Kaggle) Dataset provided 3,343 topic patents to evaluate automated similarity-based relevance. Together, these datasets formed the basis for assessing performance and accuracy across various tasks.

Patent Datasets Used

Unlike traditional news datasets, patent datasets offer more structured discourse and balanced content. On average, patent descriptions are about 2,150 words long, requiring models capable of handling detailed, long-form technical text. For intrinsic evaluations, researchers sampled 1,000 patents from a test set of 67,072 patents available in the BIGPATENT collection.

How Performance Was Measured

The study assessed accuracy using both intrinsic and extrinsic methods. Intrinsic evaluation focused on comparing the generated summaries to reference summaries, using metrics like ROUGE-1, ROUGE-L, BLEU, and BERTScore. Extrinsic evaluation, on the other hand, tested performance in downstream tasks such as classification and retrieval, with metrics like Mean Average Precision (MAP), Precision@k, and Spearman rank correlation.

For example, in retrieval experiments using the CLEF-IP 2013 dataset, automated summaries achieved a MAP@100 score of 35.40%, significantly outperforming standard patent abstracts, which scored 26.31%. These results provided valuable insights into how well NLP models can summarize patent abstracts while maintaining accuracy.

NLP Models Tested

The researchers evaluated both extractive and abstractive summarization models. Extractive models included LexRank, BERT, and SBERT (specifically the "paraphrase-MiniLM-L6-v2" model), which focus on identifying and retrieving key sentences from patent text. Abstractive models, such as BART, T5, PEGASUS, and BigBird-Pegasus, used encoder-decoder architectures to generate concise and coherent summaries. Notably, BigBird-Pegasus stood out for its ability to process sequences of up to 4,096 tokens, surpassing the typical limits of 512 or 1,024 tokens.

The study also explored hybrid approaches, combining extractive filtering with abstractive synthesis. These methods were integrated with EvoPat's multi-LLM system using Retrieval-Augmented Generation, aiming to enhance the quality and relevance of generated summaries.

Key Findings on NLP Accuracy

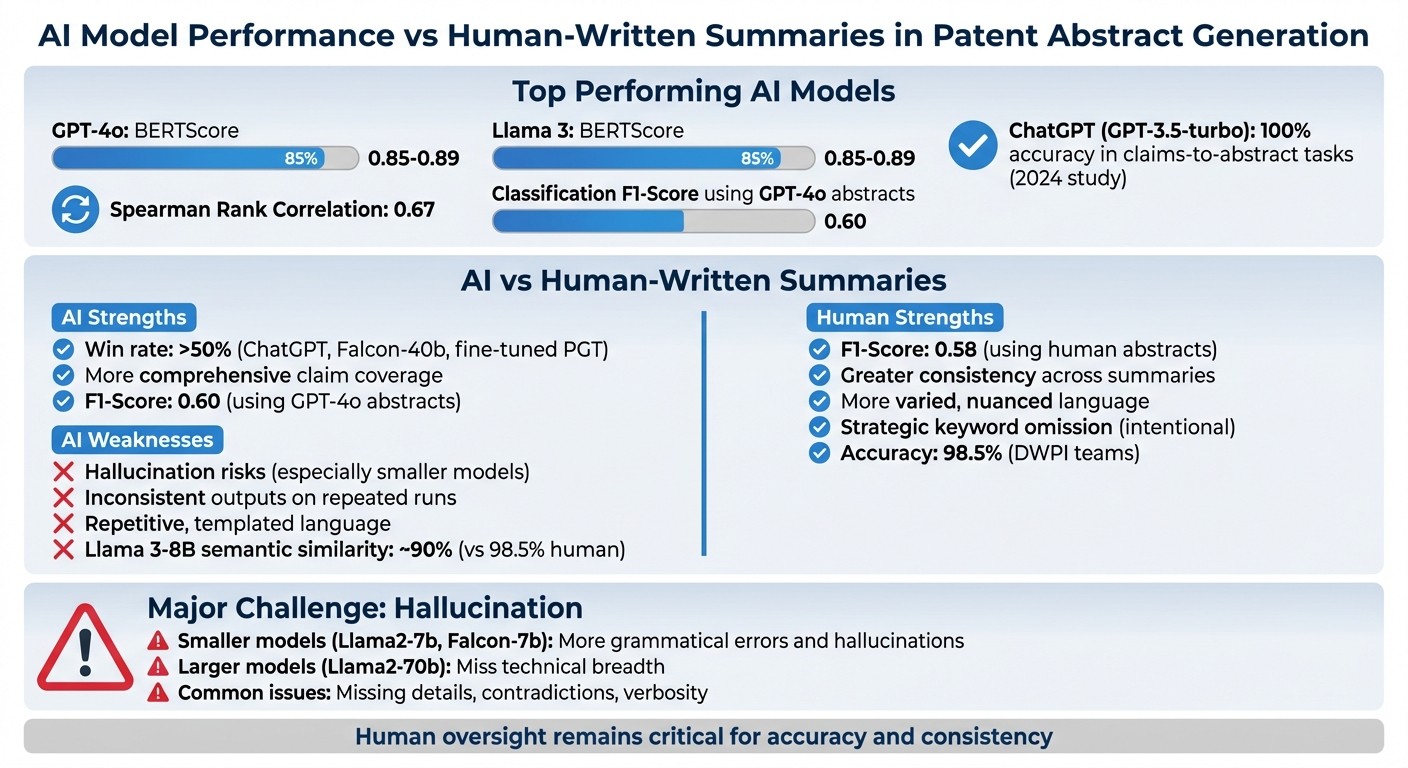

NLP Model Performance vs Human-Written Patent Abstracts: Accuracy Comparison

Accuracy Rates and Hallucination Problems

Large Language Models (LLMs) have shown impressive results in semantic similarity to human-written abstracts, with BERTScores ranging from 0.85 to 0.89. Among these, GPT-4o and Llama 3 stood out as top performers, with GPT-4o achieving a Spearman rank correlation of 0.67 in patent retrieval tasks. In a 2024 study, ChatGPT (GPT-3.5-turbo) excelled in claims-to-abstract tasks, completing them without a single error in the test set.

However, one major challenge remains: hallucination risks. This occurs when models generate information that seems plausible but is factually incorrect. Smaller models, such as Llama2-7b and Falcon-7b, were more prone to basic grammatical mistakes and hallucinations. On the other hand, larger models like Llama2-70b struggled to fully capture the technical breadth of inventions. Common issues included missing critical details, contradictory statements, and overly verbose outputs.

These findings underscore the impressive capabilities of AI models while also revealing their limitations, especially when compared to human-written summaries.

AI vs. Human-Written Summaries

When directly compared, several AI models - such as ChatGPT, Falcon-40b, and fine-tuned PGT - performed on par with or even better than humans in some cases, achieving a "win rate" of over 50%. AI-generated abstracts also boosted downstream task performance. For example, GPT-4o-produced abstracts resulted in a classification F1-score of 0.60, slightly outperforming the 0.58 score achieved using human-written abstracts.

The difference often comes down to strategic intent versus thoroughness. Human-written abstracts sometimes show "incomplete coverage", which can be intentional. Patent drafters may omit certain keywords or details to make patents less discoverable to competitors in databases. In contrast, AI-generated abstracts tend to provide more comprehensive coverage of independent claims.

That said, consistency remains a hurdle for AI. While human experts rely on systematic reviews to maintain uniformity, LLMs can produce varying summaries for the same patent if the prompt is run multiple times, due to their predictive nature. Additionally, AI-generated summaries often rely on repetitive, templated language, whereas human-written abstracts display more variety and nuance. Although AI shows strong potential for tasks like patent search and analysis, human oversight is still critical to ensure accuracy and consistency in abstract summaries.

Applications for Patent Professionals

Better Patent Search and Analysis

Natural Language Processing (NLP) summaries play a key role in improving patent searches by acting as concise, effective queries. These summaries distill essential technical concepts while avoiding the unnecessary complexity of legal jargon.

Platforms like Patently, with its Vector AI technology, take this a step further by using vector-based retrieval systems. Unlike traditional keyword matching, these systems focus on semantic meaning, addressing a major challenge in patent research: over 70% of technical information in patents isn't available from other sources. This semantic approach ensures deeper insights and more accurate search results.

The BERT-refined keyphrase extraction (BRKE) method further enhances this process. It identifies domain-specific technical terms with an F1-score of 52.97%, outperforming KeyBERT by 9.52%. When paired with multi-agent analysis systems, professionals can compare data across a staggering 17.3 million valid patents worldwide, eliminating the need to manually sift through dense technical details. These tools not only simplify searches but also pave the way for more efficient patent drafting.

Faster Patent Drafting

AI-driven drafting tools are transforming how patent abstracts are created. By using NLP, platforms like Patently can generate precise, well-structured abstracts directly from claims. This reduces the workload for attorneys, saving both time and billable hours.

Beyond drafting, AI also improves tasks like patent classification. Summaries generated by large language models (LLMs) from detailed descriptions have boosted subclass-level patent classification scores by about 3 points on benchmark datasets. This demonstrates that AI-generated content isn't just faster - it often enhances related tasks like classification and retrieval. Homaira Huda Shomee, a researcher in the field, highlighted this capability:

"Modern LLMs can generate high-fidelity and stylistically appropriate patent abstracts, often surpassing domain-specific baselines".

These advancements show how AI is reshaping patent processes, making them more efficient and accurate.

Conclusion and Future Directions

Main Takeaways

The research demonstrates that fine-tuned LLMs are approaching human-level performance. For example, in 2025, Amy J.C. Trappey and Yuga Y.C. Lin developed a system using the Llama 3-8B-Instruct model, which achieved nearly 90% semantic similarity to expert-written summaries. While impressive, this still falls short of the 98.5% accuracy achieved by DWPI teams.

There are trade-offs to consider. Fine-tuning improves the ability to mimic patent-specific language but also increases the risk of hallucinations. In practice, this means AI-generated summaries from top patent tools are best suited as starting points, not as finished products. These tools can speed up drafting and searching processes, but human oversight remains essential.

These findings highlight the need for further research to overcome existing challenges.

Areas for Future Research

To maximize AI's potential in patent summarization, addressing its current shortcomings is crucial. A top priority is reducing hallucinations. Current LLMs lack automated systems to ensure abstracts accurately reflect the legal scope of patents. Future advancements should focus on building verification mechanisms that catch factual errors before human review.

Another challenge is long-document processing. With the average patent description exceeding 11,000 tokens, many models struggle to handle such extensive content. Researchers are investigating hybrid approaches that combine extractive methods, like LexRank, with abstractive models, such as BART, to better manage these large, complex documents.

Domain generalization is another pressing issue. Models need to perform reliably across a wide range of fields - from biotechnology to telecommunications - without requiring separate fine-tuning for each domain. As Amy J.C. Trappey explained:

"Applying fine-tuned LLM technology to automatically summarize patent documents has greatly improved the efficiency and accuracy of patent reviews, understanding, and overall IP management".

The next step is ensuring these advancements can deliver consistent results across all patent domains.

FAQs

How can I tell if an AI-generated patent abstract is hallucinating?

To spot inaccuracies, or "hallucinations", in AI-generated patent abstracts, you can use specialized frameworks that evaluate the faithfulness of summaries. These tools are equipped with hallucination detection techniques, which analyze the content for accuracy and highlight any mismatched or incorrect details. This process ensures the generated abstracts stay true to the original information.

Which evaluation metrics matter most for patent abstract quality?

Key factors for assessing the quality of patent abstracts include common NLP metrics like BLEU, ROUGE, and BERTScore. Beyond these, it's important to evaluate how well the abstract holds up against input variations and how effectively it supports related tasks, such as patent classification and retrieval.

When should patent professionals use AI summaries vs human-written abstracts?

AI-generated summaries can help simplify tasks like patent review, classification, and retrieval. They save time by highlighting critical patents quickly. However, human-written abstracts remain crucial for achieving precise, nuanced understanding and maintaining legal accuracy. AI summaries are most effective when used as tools to boost efficiency, with human oversight ensuring the final abstracts are legally sound and thoroughly reflect the invention's key details.