Semantic Mapping in Patent Citation Analysis

Intellectual Property Management

Feb 9, 2026

AI-driven semantic mapping uncovers hidden patent links, improves prior art searches, predicts impact, and streamlines portfolio and competitive analysis.

Semantic mapping is reshaping how patents are analyzed by focusing on the meaning behind patent language rather than relying on keyword matching. This approach uses AI, natural language processing (NLP), and machine learning to identify connections between patents that traditional citation methods often miss. Here's why it matters:

What It Does: Groups related terms and concepts (e.g., "self-driving cars" and "autonomous vehicles") to reveal deeper relationships.

Why It’s Needed: Traditional citation analysis struggles with implicit connections, newer patents with fewer citations, and massive patent databases.

Key Benefits: Improves prior art searches, identifies innovation gaps, and supports better portfolio management.

How It Works: Uses advanced AI models like PatentSBERTa and semantic graphs to analyze text and metadata for meaningful insights.

Semantic mapping enhances patent research by uncovering connections, improving search accuracy, and providing better tools for competitive analysis and IP strategy.


Applications of Semantic Mapping in Patent Research

Semantic mapping has turned patent research into a smarter, more strategic process. By focusing on the meaning behind patent language, it helps organizations uncover insights that shape their R&D priorities, competitive strategies, and intellectual property (IP) investments.

Improving Prior Art Searches

Conducting prior art searches can feel like navigating a maze, mainly because inventors often describe similar concepts with different terms. For instance, a pharmaceutical compound might be listed by its chemical name in one patent and its commercial name in another. Semantic mapping cuts through this confusion by identifying paraphrased descriptions and conceptual overlaps that conventional keyword searches often miss.

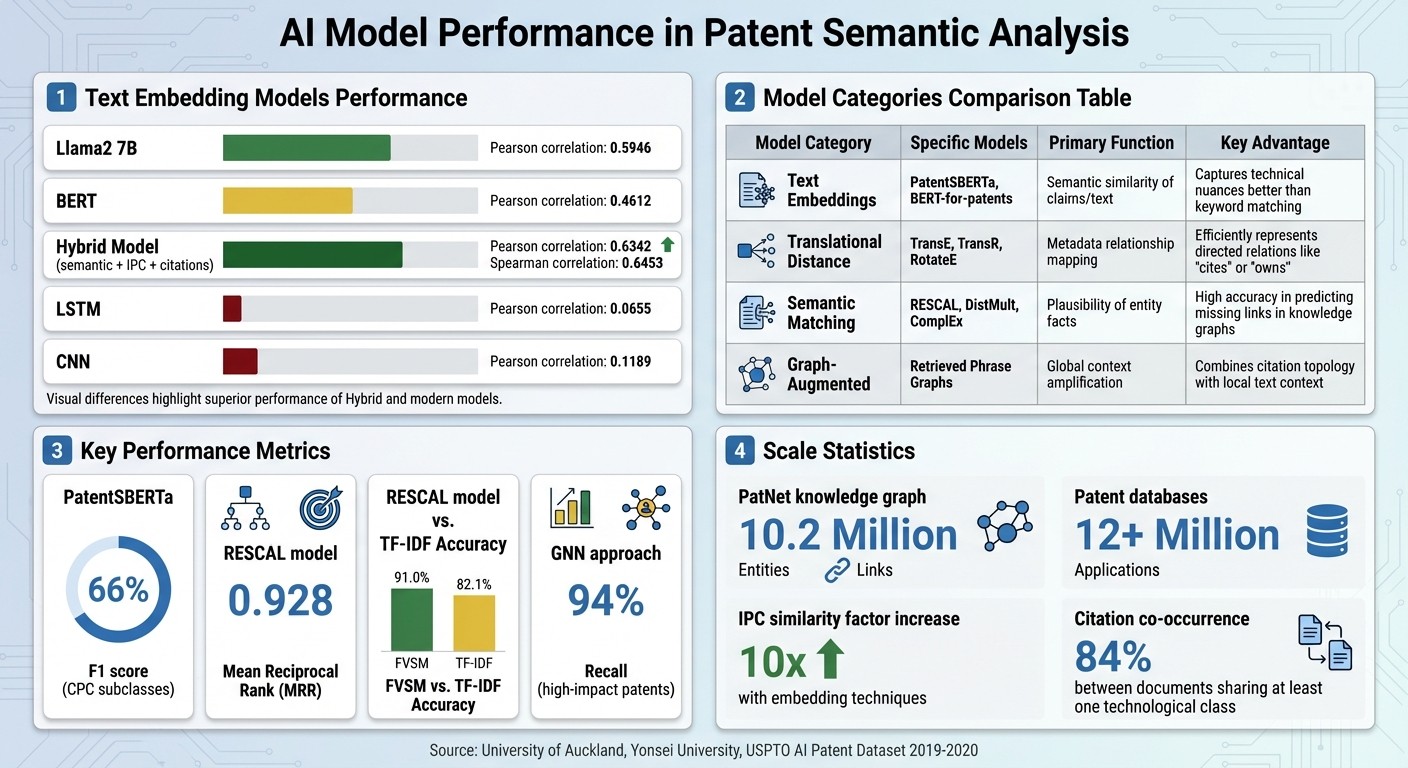

Benchmark results illustrate the gain. In a study that scored patent similarity against expert ratings, the Llama2 7B model achieved a Pearson correlation of 0.5946, significantly outperforming BERT's 0.4612. When semantic analysis was combined with IPC codes and citation data, the correlation rose further to 0.6342. In contrast, older models like LSTM and CNN lagged far behind, with correlations of just 0.0655 and 0.1189, respectively.

"Semantic mapping enhances the efficiency of this process by analyzing the semantic content of patents to identify relevant prior art." - Minesoft

This boost in accuracy isn't just technical - it has real-world implications. By uncovering patents with similar concepts (even when worded differently), companies can more reliably assess whether their inventions are novel and nonobvious, ultimately reducing the risk of infringement.

Technology Scouting and Competitive Analysis

Semantic mapping isn’t just about finding prior art; it’s also a powerful tool for technology scouting and competitive analysis. By analyzing meaning rather than relying on keywords, it overcomes the limitations of older methods.

One standout capability is identifying gaps in the patent landscape. Semantic mapping calculates the "outlierness" of documents, spotting patents with low local density in a semantic map. These outliers often represent untapped opportunities for innovation. This matters because, according to the World Intellectual Property Organization (WIPO), effective patent analysis can cut R&D time by 60% and reduce research costs by 40%.
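
The idea of low local density can be illustrated with a small sketch. The article does not say how "outlierness" is calculated, so the version below assumes a simple proxy: the mean distance from each patent to its k nearest neighbors on a 2-D semantic map. The coordinates, patent IDs, and the `knn_outlierness` function are all hypothetical.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two points of equal dimension."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def knn_outlierness(points, k=2):
    """Mean distance to the k nearest neighbors; higher means lower local density."""
    scores = []
    for i, p in enumerate(points):
        dists = sorted(euclidean(p, q) for j, q in enumerate(points) if j != i)
        scores.append(sum(dists[:k]) / k)
    return scores

# Hypothetical 2-D positions of patents on a semantic map
patent_map = {
    "P1": (0.0, 0.0),
    "P2": (0.1, 0.0),
    "P3": (0.0, 0.1),
    "P4": (0.1, 0.1),
    "P5": (3.0, 3.0),  # sits far away from the dense cluster
}

ids = list(patent_map)
scores = knn_outlierness([patent_map[i] for i in ids], k=2)
most_isolated = max(zip(ids, scores), key=lambda pair: pair[1])[0]
```

Here `most_isolated` is `"P5"`, the patent sitting in a sparse region of the map. Production systems may use more robust density measures, such as Local Outlier Factor, and work in the full embedding space rather than two dimensions.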

It also tracks competitors' strategies using Subject-Action-Object (SAO) structures. These structures map the functional relationships in patents, revealing not only what competitors are patenting but also how they’re solving technical challenges. As Janghyeok Yoon and Kwangsoo Kim explain:

"A patent network based on semantic patent analysis using subject-action-object (SAO) structures is proposed to identify the technological importance of patents, the characteristics of patent clusters, and the technological capabilities of competitors."

This approach acts as an early warning system. By monitoring how competitors' inventors diversify, companies can detect when rivals are exploring new technology areas - often before these efforts become public knowledge through product launches or announcements.
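
To make the SAO structures described above more concrete, here is a minimal sketch that represents each patent as a set of subject-action-object triples and compares two patents by the overlap of those triples. The triples, the `SAO` type, and the use of exact-match Jaccard overlap are illustrative assumptions; real SAO-based systems typically extract triples with a parser and compare them semantically rather than by exact string match.

```python
from typing import NamedTuple

class SAO(NamedTuple):
    """One subject-action-object triple extracted from patent text."""
    subject: str
    action: str
    obj: str

def sao_jaccard(triples_a, triples_b):
    """Share of distinct triples that two patents have in common."""
    a, b = set(triples_a), set(triples_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

# Hypothetical triples for two patents
patent_a = [
    SAO("sensor", "detect", "obstacle"),
    SAO("controller", "adjust", "speed"),
]
patent_b = [
    SAO("sensor", "detect", "obstacle"),
    SAO("camera", "capture", "image"),
]

similarity = sao_jaccard(patent_a, patent_b)  # 1 shared triple out of 3 distinct
```

Pairwise scores like this one could serve as edge weights in a patent network, with clusters in that network pointing to shared technical approaches.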

Portfolio Management and IP Strategy

Beyond search and competitor analysis, semantic mapping plays a crucial role in portfolio management and IP strategy.

For companies with extensive patent portfolios, semantic mapping organizes assets into meaningful clusters based on their technical content. These clusters reveal strengths, gaps, and redundancies in research. This insight helps businesses decide which patents to keep, license, or sell.

"Patent similarity measurement... can not only efficiently derive technical information, but also detect patent infringement risks and evaluate whether inventions meet novelty and innovation criteria." - Yongmin Yoo et al., University of Auckland

The benefits extend to mergers and acquisitions. During due diligence, semantic mapping evaluates the technical alignment between a target company’s IP and the acquirer’s portfolio. This analysis uncovers potential synergies or conflicts, offering a clearer picture of the acquisition’s value. For investors, it provides more than just a patent count - it reveals how the technologies within a portfolio connect and complement one another.

Another advantage is resource optimization. By grouping related IP assets, semantic mapping helps R&D teams avoid duplicating efforts and allocate resources more effectively. Traditional classification systems often fall short, as the average number of IPC codes per patent is just 1.602. Semantic mapping bridges this gap by capturing the full scope of a patent's technical relationships.

Patently leverages these advanced semantic mapping techniques to provide actionable insights for prior art searches, technology scouting, and portfolio management.

Technical Foundations of Semantic Mapping

AI Model Performance Comparison for Patent Semantic Analysis

Semantic mapping's effectiveness in prior art searches and competitive analysis rests on advanced AI models and the processing pipeline around them. These systems turn patent text into measurable connections between documents, enabling deeper insights.

Core Techniques and Processes

The process begins with breaking down patent text into individual words, tagging their grammatical roles, and reducing them to root forms to uncover their core meaning. The system then clusters related words into technical phrases. For instance, "semiconductor fabrication process" conveys a specific idea that "semiconductor" alone does not capture. These phrases are evaluated using two metrics:

Unithood: A measure combining frequency and word length.

Termhood: A chi-squared score assessing how keyword patterns deviate from randomness.
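
The two metrics can be sketched roughly as follows. The article gives no exact formulas, so both functions are assumptions: `unithood` weights frequency by the number of words in the phrase, and `termhood` is a chi-squared statistic comparing how often a phrase appears in each technology class against what a random spread would predict.

```python
def unithood(frequency, phrase):
    """Assumed form: phrase frequency weighted by its length in words."""
    return frequency * len(phrase.split())

def chi_squared(observed, expected):
    """Sum of squared deviations from expectation, scaled by expectation."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected) if e > 0)

def termhood(phrase_counts, class_sizes):
    """
    phrase_counts[i]: documents in class i that contain the phrase.
    class_sizes[i]:   total documents in class i.
    A high score means the phrase is concentrated in some classes
    instead of being spread in proportion to class size.
    """
    total_hits = sum(phrase_counts)
    total_docs = sum(class_sizes)
    expected = [total_hits * size / total_docs for size in class_sizes]
    return chi_squared(phrase_counts, expected)

# Hypothetical counts across two equally sized technology classes
concentrated = termhood([18, 2], [100, 100])   # phrase clusters in class 0
evenly_spread = termhood([10, 10], [100, 100])  # phrase looks like a generic term
length_weighted = unithood(5, "semiconductor fabrication process")
```

With these toy numbers, the concentrated phrase scores 12.8 while the evenly spread one scores 0, which is the kind of separation that lets a system keep technical phrases and drop generic ones.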

Next, the system builds weighted networks by analyzing how often these phrases co-occur across patents. Generic terms are filtered out, ensuring that only meaningful relationships remain. This refinement is crucial since 84% of patent citations occur between documents sharing at least one technological class.
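
A weighted co-occurrence network of this kind can be sketched with the standard library alone. The phrase lists and the set of generic terms below are made up for illustration, and `cooccurrence_network` is a hypothetical helper, not a function from any particular tool.

```python
from collections import Counter
from itertools import combinations

def cooccurrence_network(patent_phrases, generic_terms=frozenset()):
    """
    Count how many patents each pair of phrases appears in together.
    Returns a Counter mapping (phrase_a, phrase_b) to the edge weight,
    with each pair stored in alphabetical order.
    """
    edges = Counter()
    for phrases in patent_phrases:
        kept = sorted(set(phrases) - generic_terms)  # drop generic terms and duplicates
        for a, b in combinations(kept, 2):
            edges[(a, b)] += 1
    return edges

# Hypothetical phrases extracted from three patents
patents = [
    ["lithium battery", "thermal management", "method"],
    ["lithium battery", "thermal management", "system"],
    ["lithium battery", "charging circuit", "method"],
]

network = cooccurrence_network(
    patents, generic_terms=frozenset({"method", "system"})
)
```

After filtering, the network holds two edges: "lithium battery" with "thermal management" (weight 2) and "charging circuit" with "lithium battery" (weight 1). Without the filter, "method" and "system" would add edges that say nothing about the technology.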

"Semantic mapping for patent analysis involves using natural language processing (NLP) and machine learning techniques to understand and categorise the content of patents based on their textual descriptions, protections, claims, and other relevant information." - Minesoft

By combining semantic analysis with bibliographic data - like citations, inventors, and classification codes - the system creates a more comprehensive picture than text analysis alone.
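
One simple way to picture this combination is a weighted blend of a text similarity score with overlap measures on classification codes and citations. The weights, the use of Jaccard overlap, and the `hybrid_similarity` function below are illustrative assumptions, not the method of any specific study or product.

```python
def jaccard(a, b):
    """Overlap between two sets, from 0.0 (disjoint) to 1.0 (identical)."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if (a | b) else 0.0

def hybrid_similarity(text_sim, ipc_a, ipc_b, cites_a, cites_b,
                      weights=(0.6, 0.2, 0.2)):
    """
    Blend semantic and bibliographic signals into one score.
    The weights are hypothetical and would normally be tuned on labeled pairs.
    """
    w_text, w_ipc, w_cite = weights
    return (
        w_text * text_sim
        + w_ipc * jaccard(ipc_a, ipc_b)
        + w_cite * jaccard(cites_a, cites_b)
    )

# Hypothetical pair: moderate text similarity, same IPC class, one shared citation
score = hybrid_similarity(
    text_sim=0.5,
    ipc_a={"G06N"}, ipc_b={"G06N"},
    cites_a={"US1", "US2"}, cites_b={"US2", "US3"},
)
```

The bibliographic terms lift the score above what the text alone would give, which is the intuition behind hybrid models: two patents in the same class that cite the same work are more likely to be related than their wording suggests.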

AI Models and Algorithms

The true strength of semantic mapping lies in AI models that convert patent language into mathematical vectors. Transformer-based models such as PatentSBERTa, BERT-for-patents, and Sentence-BERT (SBERT) excel at representing patent text in high-dimensional spaces. These models are fine-tuned on patent-specific datasets, allowing them to handle complex technical and legal language.

For example, PatentSBERTa achieved an F1 score of 66% in predicting CPC subclasses, surpassing earlier classification models. Using these vectors, the system calculates semantic similarity through cosine similarity, which measures how closely two vectors align.
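
Cosine similarity itself is straightforward to compute. The sketch below uses tiny hand-written vectors as stand-ins; in practice the vectors would come from an embedding model such as PatentSBERTa and have hundreds of dimensions.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_u * norm_v)

# Toy 3-dimensional "embeddings" (illustrative values only)
autonomous_vehicle = [0.9, 0.1, 0.2]
self_driving_car = [0.8, 0.2, 0.1]
drug_compound = [0.1, 0.9, 0.3]

close_pair = cosine_similarity(autonomous_vehicle, self_driving_car)
distant_pair = cosine_similarity(autonomous_vehicle, drug_compound)
```

Because cosine similarity depends only on direction, not vector length, a long patent and a short one can still score as near-identical if they discuss the same concepts.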

For metadata like citations and inventors, knowledge graph embedding models - such as TransE, TransR, and RESCAL - create another layer of representation. These models capture relationships between entities. In one study, the RESCAL model achieved a Mean Reciprocal Rank (MRR) of 0.928 when predicting patent relationships within the PatNet knowledge graph, which includes over 10.2 million entities and 106.8 million links spanning decades of USPTO data.
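
As a rough illustration of how a translational model scores a relationship, the sketch below implements the TransE idea: a fact (head, relation, tail) is plausible when the head embedding plus the relation embedding lands close to the tail embedding. The embedding values are made up, and RESCAL, the model cited above, uses a different (bilinear) scoring function that is not shown here.

```python
import math

def transe_score(head, relation, tail):
    """
    TransE plausibility: negative Euclidean distance between (head + relation)
    and tail. Scores closer to zero indicate a more plausible fact.
    """
    return -math.sqrt(
        sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail))
    )

# Toy 2-dimensional embeddings (illustrative values only)
patent_a = [0.2, 0.1]
patent_b = [0.5, 0.5]
patent_c = [-0.9, 0.8]
cites = [0.3, 0.4]  # embedding of the "cites" relation

likely = transe_score(patent_a, cites, patent_b)    # a + cites lands on b
unlikely = transe_score(patent_a, cites, patent_c)  # a + cites is far from c
```

Ranking every candidate tail by this score is what produces the rank-based metrics such as MRR quoted for the PatNet experiments.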

| Model Category | Specific Models | Primary Function | Key Advantage |
|---|---|---|---|
| Text Embeddings | PatentSBERTa, BERT-for-patents | Semantic similarity of claims/text | Captures technical nuances better than keyword matching |
| Translational Distance | TransE, TransR, RotatE | Metadata relationship mapping | Efficiently represents directed relations like "cites" or "owns" |
| Semantic Matching | RESCAL, DistMult, ComplEx | Plausibility of entity facts | High accuracy in predicting missing links in knowledge graphs |
| Graph-Augmented | Retrieved Phrase Graphs | Global context amplification | Combines citation topology with local text context |

Given the immense size of patent databases - often exceeding 12 million applications - efficient search algorithms are essential. Approximate nearest-neighbor techniques let systems quickly identify semantically similar patents without comparing every possible pair. Patents sharing the same IPC class show nearly a tenfold increase in similarity when compared using these embeddings.
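
The article does not name a specific nearest-neighbor method, so the following sketch assumes one common option: locality-sensitive hashing with random hyperplanes. Each vector is reduced to a short bit signature, and only patents that share a signature with the query are treated as candidates. All names and vectors here are hypothetical.

```python
import random
from collections import defaultdict

def make_hyperplanes(dim, n_bits, seed=0):
    """Draw n_bits random hyperplanes (as normal vectors) in dim dimensions."""
    rng = random.Random(seed)
    return [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n_bits)]

def signature(vector, hyperplanes):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple(
        1 if sum(v * h for v, h in zip(vector, plane)) >= 0 else 0
        for plane in hyperplanes
    )

def build_index(vectors, hyperplanes):
    """Group patent IDs into buckets keyed by their bit signature."""
    index = defaultdict(list)
    for key, vec in vectors.items():
        index[signature(vec, hyperplanes)].append(key)
    return index

def candidates(query, index, hyperplanes):
    """Return only the patents that share the query's bucket."""
    return index.get(signature(query, hyperplanes), [])

# Toy embeddings (illustrative values only)
vectors = {"P1": [0.9, 0.1, 0.0], "P2": [0.0, 0.2, 0.95]}
planes = make_hyperplanes(dim=3, n_bits=8, seed=42)
index = build_index(vectors, planes)

# A query pointing in the same direction as P1 lands in P1's bucket
query = [2 * x for x in vectors["P1"]]
matches = candidates(query, index, planes)
```

The trade-off is that vectors which are close but not identical in direction can fall into different buckets, so practical systems use several hash tables or dedicated libraries, then re-rank the candidates with exact cosine similarity.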

"Text Embedding is a vital precursor to patent analysis tasks." - Hamid Bekamiri, Daniel S. Hain, Roman Jurowetzki

Some systems take it further by constructing "phrase graphs" that link patents to their citation networks. These graphs merge local text context with the global context of citation relationships, offering a dual perspective that highlights both the content of a patent and its role in the broader technological ecosystem.

Data Quality and Validation

The accuracy of these models depends heavily on the quality of the input data. Preprocessing steps include cleaning metadata from sources like USPTO or PATSTAT, disambiguating inventors and assignees, and filtering out erroneous citation links.

Validation is equally rigorous. A 2023/2024 study by the University of Auckland and Yonsei University tested a hybrid semantic model using 420 patent pairs from the 2019-2020 USPTO Artificial Intelligence Patent Dataset. A panel of three scientists and a legal expert assessed the model using a 7-point scale across five criteria: Area, Task, Purpose, Methodology, and Application.

To ensure consistency, any patent pair with a variance score above 8 was re-evaluated by the legal expert. Measured against these expert ratings, the hybrid model achieved a Pearson correlation of 0.6342 and a Spearman correlation of 0.6453, outperforming standalone models like Llama2 (0.5946) and BERT (0.4612).
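
For readers less familiar with the two statistics, here is a minimal sketch of how Pearson and Spearman correlations between model scores and expert ratings can be computed. The scores and ratings are invented for illustration and are not taken from the study.

```python
import math

def pearson(x, y):
    """Linear correlation between two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)

def average_ranks(values):
    """Rank values from 1 upward, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman(x, y):
    """Rank correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical model similarity scores and expert ratings on a 7-point scale
model_scores = [0.10, 0.40, 0.50, 0.90]
expert_ratings = [1, 3, 4, 7]

linear_agreement = pearson(model_scores, expert_ratings)
rank_agreement = spearman(model_scores, expert_ratings)
```

Pearson rewards scores that track the ratings linearly, while Spearman only asks whether the model orders the pairs the same way the experts do, which is why studies often report both.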

"If patent similarity is measured considering only the bibliographic information of the patent, it may lead to inaccurate results... due to citation hysteresis." - Yoo et al.

Common validation metrics - such as Mean Rank (MR), Mean Reciprocal Rank (MRR), and Hits@k - help quantify how well models predict target entities like cited patents. These benchmarks ensure that advancements in model design translate into practical improvements.
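
These three metrics are simple to compute once each test query has a rank for its correct answer (rank 1 means the model's top prediction was right). The ranks below are invented for illustration.

```python
def mean_rank(ranks):
    """Average position of the correct answer; lower is better."""
    return sum(ranks) / len(ranks)

def mean_reciprocal_rank(ranks):
    """Average of 1/rank; 1.0 means the correct answer is always first."""
    return sum(1 / r for r in ranks) / len(ranks)

def hits_at_k(ranks, k):
    """Share of queries whose correct answer appears in the top k."""
    return sum(1 for r in ranks if r <= k) / len(ranks)

# Hypothetical ranks of the true cited patent for four test queries
ranks = [1, 2, 4, 10]

mr = mean_rank(ranks)              # (1 + 2 + 4 + 10) / 4
mrr = mean_reciprocal_rank(ranks)  # (1 + 0.5 + 0.25 + 0.1) / 4
hits3 = hits_at_k(ranks, 3)        # 2 of 4 queries ranked in the top 3
```

MRR is less sensitive to a few very poor ranks than Mean Rank, which is one reason an MRR close to 1, like the 0.928 reported for RESCAL, is read as the correct entity usually appearing at or near the top.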

Patently's Vector AI exemplifies these techniques, leveraging advanced embeddings and rigorous validation to provide precise semantic search results, moving far beyond traditional keyword-based methods. These technical foundations not only enhance the accuracy of semantic mapping but also influence how patents are cited and analyzed.

Impact on Patent Citation Practices

Semantic mapping is changing the way patent professionals handle citations, making the process smarter and more meaningful. Instead of relying on keyword searches or manual classification, it digs into the actual meaning behind patent language. This shift not only speeds up searches but also changes how patents are evaluated for relevance.

Finding Semantically Relevant Citations

Semantic mapping excels at connecting patents that describe the same idea using different terms. For example, it can link a patent that mentions "autonomous vehicles" with another referring to "self-driving cars." Traditional keyword searches often miss these connections. This is particularly important in fields like pharmaceuticals, where the same compound or therapy might be described in entirely different ways depending on the industry.

By cutting down prior art search times by 60-70%, semantic mapping allows professionals to spend more time assessing relevance rather than sifting through terminology. It also uncovers functional relationships that standard classification systems like IPC or CPC often overlook.

"AI-powered semantic search enables concept-based searching that goes beyond keyword matching... automatically expanding searches to capture all relevant patents regardless of terminology." - Patsnap

Data supports the effectiveness of this approach. Semantic classes show a much stronger tendency for similar patents to cite each other compared to traditional classification systems (0.085 vs. 0.009). Additionally, the Feature Vector Space Model (FVSM) achieves 91.0% accuracy in identifying similar patents, outperforming older methods like TF-IDF, which only hit 82.1%.

Semantic mapping also brings clarity to citation types. Research published in the Strategic Management Journal in October 2025 highlights that in-text citations - those embedded in technical descriptions - align more closely with the actual knowledge flow between inventors. These citations often indicate meaningful technological linkages rather than legal formalities added by examiners. This distinction helps R&D teams focus on citations that truly matter for innovation.

Patently's Vector AI is one example of how semantic techniques are being applied. It delivers concept-based search results that cut through varied terminology, making keyword limitations a thing of the past.

Assessing Patent Quality Through Citation Analysis

Semantic mapping doesn’t just improve search accuracy - it also changes how patent quality is judged. Traditional citation analysis struggles with "citation hysteresis", where newer patents are unfairly penalized because they haven’t had enough time to gather citations. Semantic mapping sidesteps this issue by evaluating the conceptual alignment between patents, offering a more age-neutral quality measure.

In November 2024, researchers Rabindra Nath Nandi, Suman Kalyan Maity, Brian Uzzi, and Sourav Medya introduced a Graph Neural Network (GNN) approach in the Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing. Their method used semantic graphs based on text similarities to predict which patents would receive high citation counts. This approach achieved an impressive 94% recall, showing that semantic analysis can effectively forecast a patent's future significance.

"The patent citation count is a good indicator of patent quality." - Rabindra Nath Nandi et al.

This capability enables patent professionals to identify important innovations early, even before citation networks mature.
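
The two building blocks mentioned here, a semantic graph built from text similarities and recall as the evaluation metric, can be sketched in a few lines. This is not the researchers' GNN: the similarity values, the threshold, and the patent IDs are hypothetical, and the graph neural network that would learn from this graph is omitted.

```python
def build_semantic_graph(similarities, threshold):
    """
    Connect two patents when their text similarity meets the threshold.
    similarities maps (patent_a, patent_b) to a similarity score.
    Returns an adjacency mapping of patent ID to a set of neighbors.
    """
    graph = {}
    for (a, b), sim in similarities.items():
        graph.setdefault(a, set())
        graph.setdefault(b, set())
        if sim >= threshold:
            graph[a].add(b)
            graph[b].add(a)
    return graph

def recall(predicted, actual):
    """Share of truly high-impact patents that the model flagged."""
    actual = set(actual)
    if not actual:
        return 0.0
    return len(actual & set(predicted)) / len(actual)

# Hypothetical pairwise text similarities
similarities = {("A", "B"): 0.90, ("A", "C"): 0.20, ("B", "C"): 0.75}
graph = build_semantic_graph(similarities, threshold=0.7)

# Hypothetical predictions versus patents that later became highly cited
flagged = ["A", "B"]
highly_cited = ["B", "C"]
score = recall(flagged, highly_cited)  # 1 of 2 high-impact patents found
```

High recall means few important patents are missed, which suits early screening; it says nothing about how many flagged patents turn out to be unimportant, so precision needs to be checked separately.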

| Assessment Factor | Traditional Citation Analysis | Semantic Mapping Analysis |
|---|---|---|
| Primary Indicator | Raw citation counts | Conceptual alignment & semantic graphs |
| Timing Limitation | Biased toward older patents | Evaluates quality regardless of age |
| Knowledge Flow | Often reflects legal input | Reflects genuine inventor knowledge |
| Predictive Accuracy | Limited by citation hysteresis | Up to 94% recall for high-impact patents |

The future lies in combining semantic mapping with traditional bibliographic data - citations, inventors, and assignees. This hybrid approach offers a deeper understanding of technological relationships and impact. It allows patent professionals to make smarter decisions about portfolio strategies, licensing, and competitive positioning based on the quality of connections, not just the volume of citations.

The Future of Semantic Mapping in Patent Analysis

Semantic mapping is evolving far beyond simple keyword matching, stepping into a realm of more sophisticated capabilities. The next wave of patent analysis tools will lean heavily on Pretrained Language Models (PLMs) and Large Language Models (LLMs) to handle complex tasks like patent classification and retrieval. This shift paves the way for systems that don’t just retrieve patents but also interpret and synthesize legal insights.

A major transformation in this field is the rise of agentic systems, fundamentally changing how patent professionals work. These systems are designed to autonomously interpret inventions, analyze prior art iteratively, and generate structured legal intelligence. As Thomas Chazot, Head of Growth Marketing at DeepIP, puts it:

"Agentic search represents a new approach... Rather than acting as a passive retrieval tool, an agentic system autonomously interprets an invention, explores the prior art landscape through iterative reasoning, and produces structured legal intelligence."

Another exciting development is the use of graph-augmented embeddings, which connect patent language to broader citation networks. This approach enriches the analysis by incorporating global contextual information, surpassing what localized text analysis alone can achieve. For example, in December 2025, researchers at the Max Planck Institute for Innovation and Competition introduced Pat-SPECTER, a cross-corpus language similarity model. This tool enabled simultaneous semantic comparison of English scientific publications and patents, effectively tracing how knowledge flows from academic research to industrial applications.

Hybrid approaches are also gaining traction, blending semantic similarity with bibliographic and technical metadata. This combination consistently delivers more accurate results, particularly when dealing with specialized content like chemical formulas, technical diagrams, and niche terminology. By integrating diverse data types, these methods address the limitations of purely text-based analysis.

Companies like Patently are already embracing these advancements. Their Vector AI feature, for instance, offers concept-based semantic search, breaking through terminology barriers to deliver precise results. With the global patent database now exceeding 150 million documents, the ability to conduct accurate, context-aware analysis is becoming a cornerstone for competitive intellectual property strategies, technology scouting, and portfolio management. These innovations are pushing patent analysis from basic retrieval toward proactive, actionable legal intelligence.

FAQs

How does semantic mapping enhance prior art searches in patent analysis?

Semantic mapping improves prior art searches by finding links between concepts, even when they’re described using different terms. This approach helps identify related patents, pinpoint overlapping technologies, and ensures a more thorough and precise search process.

Using semantic mapping, patent professionals can lower the chances of overlooking crucial prior art, which enhances the accuracy and dependability of their analysis.

How do AI models like PatentSBERTa enhance semantic mapping in patent analysis?

AI models, like PatentSBERTa, play a key role in creating detailed embeddings of patent text, including claims. These embeddings allow for identifying similarities between patents, paving the way for advanced tools like semantic search and automated classification.

With these AI-powered insights, professionals can pinpoint connections between patents with greater efficiency. This not only boosts search precision but also simplifies tasks such as prior art analysis and organizing patent portfolios.

How does semantic mapping uncover innovation opportunities in patent portfolios?

Semantic mapping shines a light on opportunities for new ideas by examining how concepts and technologies relate to one another within patents. It highlights hidden connections and overlapping technologies, helping to pinpoint gaps in current portfolios and areas with potential for fresh inventions.

This approach not only improves the precision of patent searches but also uncovers relevant prior art that might otherwise go unnoticed. These insights play a crucial role in guiding strategic decisions and planning for innovation.