How Predictive Models Spot Emerging Patent Technologies

Intellectual Property Management

Feb 28, 2026

AI-driven patent models reveal high-impact technologies 12–24 months before market entry, turning IP data into strategic advantage.

AI-powered predictive models are transforming how companies identify emerging technologies through patents. These tools analyze vast datasets and surface trends and opportunities 12 to 24 months before technologies reach the market, giving businesses time to make proactive decisions and position themselves ahead of competitors.

Key Takeaways:

Why Patents Matter: Patents reveal future technologies and provide insights into areas ripe for innovation.

AI's Role: AI tools such as PatentBERT cut search times by 60%-80% compared with manual methods, and models that combine patent citation and funding data have been reported to predict 73% more accurately than expert opinion alone.

How It Works: Models analyze patent data, research publications, and funding trends to identify growth areas and anomalies.

Real-World Impact: Top AI-driven patent platforms save time, improve patent classification, and help organizations find untapped opportunities.

AI isn’t just speeding up patent analysis; it’s making it smarter. Companies using these tools can identify trends early, act decisively, and outpace competitors.

How Predictive Models Work in Patent Analysis

Data Sources for Model Training

Predictive models rely on vast datasets to accurately identify emerging technologies. These datasets are primarily sourced from global patent offices like the USPTO, EPO, and WIPO, as well as specialized databases such as PATSTAT, PatentsView, and the Derwent World Patent Index. These sources provide structured information, including patent titles, abstracts, full-text claims, and metadata like Cooperative Patent Classification (CPC) codes, filing dates, and citation networks.

In addition to patent data, modern models incorporate insights from scientific publications, funding trends, standards activities, and open-source developments. For instance, in 2024, China saw about 1.8 million patent applications filed, with over 70% of the detailed content in these documents being unique to those filings.

Algorithms and Techniques Used

Advanced machine learning techniques, particularly transformer-based models like BERT, RoBERTa, PatentBERT, and SciBERT, are central to patent analysis. These models are fine-tuned specifically for the language and structure of patent documents. In December 2025, the USPTO updated its AIPD 2023 system, replacing Word2Vec with "BERT for Patents" and introducing active learning techniques. Active learning focuses on refining the model's performance in areas of uncertainty, significantly improving precision to 68.18% and recall to 78.95%, compared to the earlier recall rate of just 21.05%.
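The idea behind active learning can be shown with a minimal uncertainty-sampling sketch. It is not the USPTO's implementation: the function name `select_uncertain` and the scores are hypothetical, and it assumes a binary classifier that outputs the probability of a patent being AI-related.

```python
def select_uncertain(probabilities, k):
    """Return indices of the k predictions closest to 0.5 (the most uncertain)."""
    ranked = sorted(
        range(len(probabilities)),
        key=lambda i: abs(probabilities[i] - 0.5),
    )
    return ranked[:k]


# Hypothetical model scores for six unlabeled patents.
scores = [0.97, 0.52, 0.08, 0.45, 0.71, 0.99]

# These are the patents a human reviewer would label next.
to_label = select_uncertain(scores, k=2)
print(to_label)
```

Sending only the borderline cases to a reviewer concentrates labeling effort where the model is least sure, which is where new labels are most likely to move recall.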

A notable example of these advancements is the 2024 SciencesPo Paris initiative led by Mohamed Keita. Using PatentBERT, Keita classified 88 million patent entries with 99% precision and recall on a test set. Impressively, the entire corpus was processed in just 11 hours on standard cloud infrastructure.

"BERT-based models are capable of capturing semantic nuances and handling lexical variability. This makes them especially effective for patent classification, where the same technological concept can be described using vastly different terminologies." - Mohamed Keita

These sophisticated algorithms are essential for identifying trends and spotting anomalies in patent data.

How Models Identify Trends and Anomalies

Once trained, predictive models analyze filing patterns and citation networks to uncover trends. For example, a surge of more than 30% annually in specific classification codes can signal the emergence of new technology. Citation genealogy further highlights how innovations evolve, with groundbreaking technologies often appearing at the intersection of different fields.
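The 30% growth signal can be sketched in a few lines. This is an illustration under simple assumptions: filings arrive as (classification code, year) pairs, the sample counts are invented, and codes with no filings in the prior year are skipped because their growth rate is undefined.

```python
from collections import Counter


def surging_codes(filings, year, threshold=0.30):
    """Flag codes whose filing count grew by more than `threshold` year over year."""
    counts = Counter(filings)
    flagged = {}
    for code in {c for c, _ in filings}:
        previous = counts[(code, year - 1)]
        current = counts[(code, year)]
        if previous > 0:  # growth is undefined without a prior-year baseline
            growth = (current - previous) / previous
            if growth > threshold:
                flagged[code] = round(growth, 2)
    return flagged


# Invented sample: G06N grows 40%, H04W grows 10%.
filings = (
    [("G06N", 2023)] * 10 + [("G06N", 2024)] * 14
    + [("H04W", 2023)] * 20 + [("H04W", 2024)] * 22
)
print(surging_codes(filings, 2024))
```

In practice a brand-new code with no prior-year filings may itself be a signal worth reviewing separately.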

To detect anomalies, these systems combine supervised and unsupervised learning techniques. Supervised models classify patents into established technology categories using IPC and CPC codes, while unsupervised clustering uncovers entirely new areas of innovation that lack formal classifications. Additionally, Graph Neural Networks (GNNs) map citation and inventor relationships, and Convolutional Neural Networks (CNNs) analyze technical drawings to identify visual trends in patent schematics. This multi-layered approach helps pinpoint technologies that deviate from established norms, often signaling disruptive innovations.


Step-by-Step Guide to Spotting Emerging Technologies

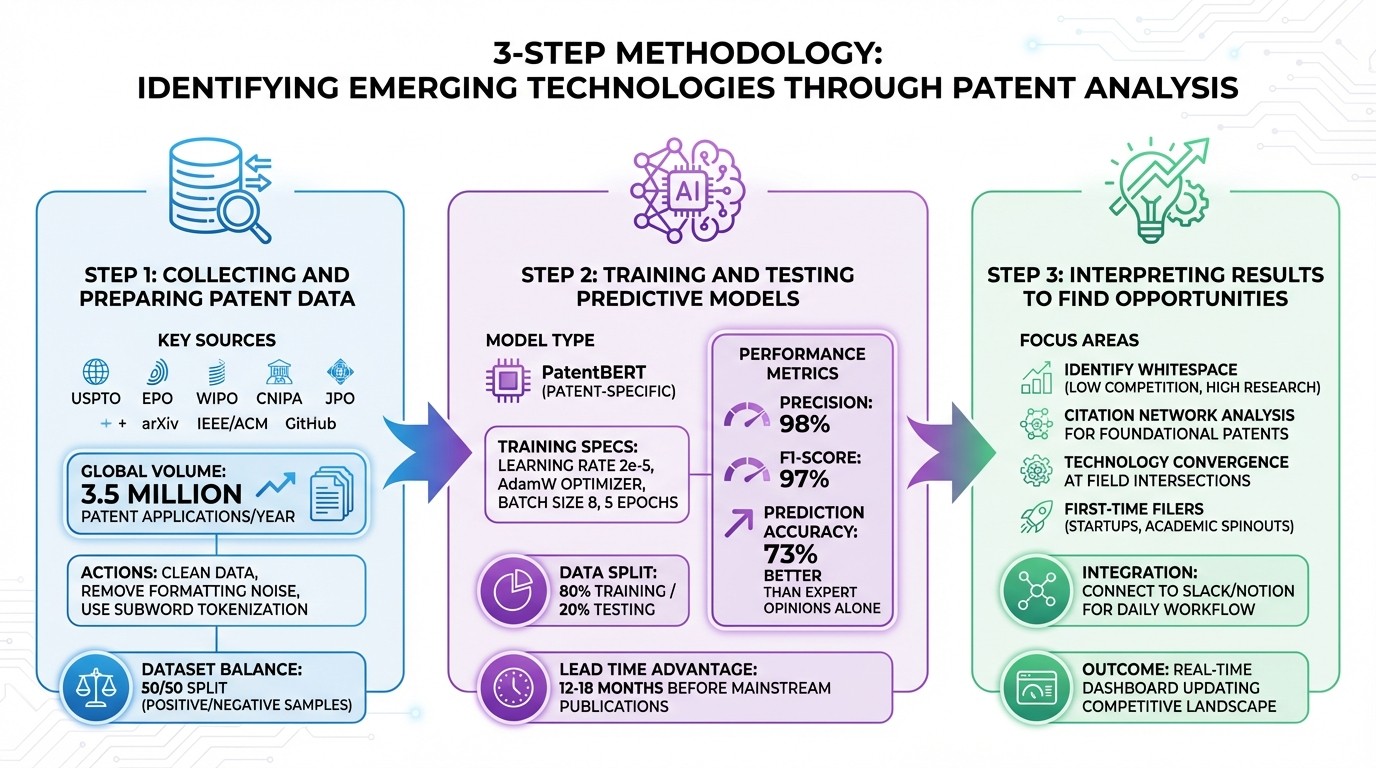

3-Step Process for Spotting Emerging Patent Technologies with AI

Collecting and Preparing Patent Data

Start by gathering patent data from global offices like USPTO, EPO, WIPO, CNIPA, and JPO, along with non-patent literature (NPL) sources such as arXiv, IEEE/ACM, and GitHub. With over 3.5 million patent applications filed globally each year, casting a wide net ensures you capture early-stage innovations that might not yet be on the radar of larger patent offices.

Once you've collected the data, the next step is cleaning and preprocessing. Patent abstracts often include leftover formatting and irrelevant characters, so it's essential to remove this noise. Subword tokenization helps manage rare or compound technical terms by splitting them into smaller, known pieces. It also helps to translate branded algorithm names into plain, descriptive language, which lets your model align with the broader terminology used in public filings.
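A minimal cleaning pass might look like the sketch below. It uses only the Python standard library, and the sample abstract is invented. Real patent feeds usually need source-specific rules on top of this.

```python
import html
import re


def clean_abstract(raw):
    """Strip markup, decode entities, and normalize whitespace in an abstract."""
    text = re.sub(r"<[^>]+>", " ", raw)           # drop HTML/XML tags
    text = html.unescape(text)                    # decode entities such as &amp;
    text = re.sub(r"[\x00-\x1f\x7f]", " ", text)  # replace control characters
    return re.sub(r"\s+", " ", text).strip()      # collapse runs of whitespace


raw = "<p>A  method &amp; system for\n\tUAV  navigation.</p>"
print(clean_abstract(raw))
```

Tags are removed before entities are decoded so that an escaped comparison such as `x &lt; y` is not mistaken for markup.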

Building a balanced training dataset is key to achieving accuracy. Begin with keyword-based extraction to identify potentially relevant patents, followed by semi-automated labeling using tools like the OpenAI API to confirm or reject their relevance. Aim for an even mix of positive (target technology) and negative (unrelated) samples, ensuring the model can effectively differentiate between relevant and irrelevant innovations. Each invention should be described by its problem, technical solution, and alternative approaches to give the model multiple ways to identify related prior art.
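The keyword-extraction and balancing steps can be sketched as follows. The keyword list and abstracts are invented, and the keyword match produces only a provisional label. The confirm-or-reject pass described above would still need to run before training.

```python
import random


def build_balanced_set(abstracts, keywords, seed=0):
    """Split abstracts by keyword match, then downsample to a 50/50 mix."""
    candidates, others = [], []
    for text in abstracts:
        lowered = text.lower()
        matched = any(keyword in lowered for keyword in keywords)
        (candidates if matched else others).append(text)

    n = min(len(candidates), len(others))
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    sample = [(t, 1) for t in rng.sample(candidates, n)]
    sample += [(t, 0) for t in rng.sample(others, n)]
    rng.shuffle(sample)
    return sample


abstracts = [
    "A neural network for image classification",
    "Machine learning model for fraud detection",
    "A hinge assembly for folding doors",
    "Bicycle brake cable housing",
    "Composite coating for turbine blades",
]
keywords = ["neural network", "machine learning"]

dataset = build_balanced_set(abstracts, keywords)
labels = [label for _, label in dataset]
print(labels.count(1), labels.count(0))
```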

Once your dataset is cleaned and balanced, you’re ready to configure and train your predictive model.

Training and Testing Predictive Models

The training process starts with selecting the right model. Patent-specific models like PatentBERT are ideal because they’re designed for the long, technical, and domain-specific nature of patent documents. Fine-tune the model using a learning rate of 2e-5, the AdamW optimizer, small batch sizes (around 8), and about 5 epochs to avoid overfitting.
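The hyperparameters above can be captured in a small configuration object. This sketch is framework-agnostic: the class name `FineTuneConfig` and the training-set size of 5,600 are assumptions for illustration, and the values would still have to be passed to whatever training library you use.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneConfig:
    learning_rate: float = 2e-5
    optimizer: str = "AdamW"
    batch_size: int = 8
    epochs: int = 5

    def total_steps(self, num_training_examples):
        """Number of optimizer steps for the whole run."""
        steps_per_epoch = math.ceil(num_training_examples / self.batch_size)
        return steps_per_epoch * self.epochs


config = FineTuneConfig()
# Hypothetical training set of 5,600 patents.
print(config.total_steps(5600))
```

Knowing the total step count up front is useful when setting a learning-rate warmup or decay schedule.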

Split your dataset into an 80/20 ratio - 80% for training and 20% for testing on unseen data. For example, in one case study, a fine-tuned PatentBERT model achieved a Precision of 0.98 and an F1-Score of 0.97 when identifying AI-related patents. Use metrics like Precision, Recall, and F1-Score to evaluate the model's performance. Cross-check predictions with external indicators such as scientific publication trends, venture capital investments, and standards development activity. Studies show that combining patent citation and funding data can lead to 73% more accurate predictions compared to relying solely on expert opinions.
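The split and the three metrics can be written out in plain Python to make the definitions concrete. The labels below are invented, and a library such as scikit-learn would normally handle both steps.

```python
import random


def train_test_split(items, test_fraction=0.2, seed=42):
    """Shuffle a copy of the items and split it into train and test portions."""
    shuffled = items[:]
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def precision_recall_f1(y_true, y_pred):
    """Binary metrics where 1 marks the target technology."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


train_set, test_set = train_test_split(list(range(100)))

# Invented labels: 3 true positives, 1 false positive, 1 false negative.
y_true = [1, 1, 1, 0, 0, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 1, 0, 0]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(len(train_set), len(test_set), precision, recall, f1)
```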

The first model run serves as a calibration round to refine language and address any gaps. Organizations using patent intelligence tools can spot technology trends 12 to 18 months before they hit mainstream publications.

Once the model is validated, its outputs can guide strategic opportunity analysis.

Interpreting Results to Find Opportunities

After generating model predictions, focus on identifying areas of whitespace - regions with minimal patenting activity but accelerating research. These are often high-growth, low-competition opportunities. AI models can cluster related technologies, revealing where competition is dense and where untapped potential exists.
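One simple way to operationalize whitespace is to filter topic clusters by both signals at once. The thresholds, field names, and topic data below are all assumptions for illustration. Sensible cutoffs depend heavily on the field.

```python
def find_whitespace(topics, max_patents=50, min_research_growth=0.25):
    """Return topics with few patents but fast-growing research, fastest first."""
    hits = [
        t for t in topics
        if t["patents"] <= max_patents
        and t["research_growth"] >= min_research_growth
    ]
    hits.sort(key=lambda t: t["research_growth"], reverse=True)
    return [t["name"] for t in hits]


# Invented clusters: patent counts and yearly growth in research publications.
topics = [
    {"name": "solid-state electrolytes", "patents": 420, "research_growth": 0.35},
    {"name": "bio-based binders", "patents": 18, "research_growth": 0.60},
    {"name": "dry electrode coating", "patents": 35, "research_growth": 0.30},
    {"name": "lead-acid recycling", "patents": 12, "research_growth": 0.02},
]
print(find_whitespace(topics))
```

The first topic is excluded for being crowded and the last for stagnant research, leaving the two candidates that match the whitespace definition.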

Conduct citation network analysis to find foundational patents. Technologies with high citation counts often serve as the backbone for entire industries.
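As a first approximation, foundational patents can be surfaced by counting forward citations. The sketch below assumes citations are available as (citing, cited) pairs, and the patent IDs are placeholders.

```python
from collections import Counter


def most_cited(citations, top_n=3):
    """Rank patents by how many later patents cite them."""
    counts = Counter(cited for _, cited in citations)
    return counts.most_common(top_n)


# Placeholder citation edges: (citing patent, cited patent).
citations = [
    ("P4", "P1"), ("P5", "P1"), ("P6", "P1"),
    ("P5", "P2"), ("P6", "P2"),
    ("P6", "P3"),
]
print(most_cited(citations, top_n=2))
```

Raw counts favor older patents, which have had more time to be cited, so comparisons are fairer within the same filing period.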

"A well-built landscape is more like a dashboard that keeps updating in real time, revealing the shape of your competitive and technological surroundings as they shift." - PowerPatent

Look for technology convergence by analyzing cross-citations between different classification codes. Innovations often emerge at the intersection of distinct fields. Pay attention to first-time filers in your area, such as startups or academic spinouts, as they could be potential collaborators or acquisition targets. To make these insights actionable, integrate intellectual property signals into your team’s workflow using generative AI patent tools or platforms like Slack and Notion. This ensures that patent intelligence becomes part of daily operations rather than a separate legal task.
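Cross-citation analysis can be approximated by counting citations that cross classification boundaries. This sketch assumes each patent carries a single primary code, which is a simplification, and the patent IDs and code assignments are placeholders.

```python
from collections import Counter


def cross_field_citations(citations, code_of):
    """Count citations whose citing and cited patents have different codes."""
    pairs = Counter()
    for citing, cited in citations:
        a, b = code_of[citing], code_of[cited]
        if a != b:
            # Sort so that (A, B) and (B, A) count as the same pairing.
            pairs[tuple(sorted((a, b)))] += 1
    return pairs.most_common()


# Placeholder data: primary classification code per patent.
code_of = {"P1": "G06N", "P2": "A61B", "P3": "G06N", "P4": "A61B", "P5": "H04W"}
citations = [("P2", "P1"), ("P4", "P3"), ("P4", "P1"), ("P3", "P1"), ("P5", "P1")]
print(cross_field_citations(citations, code_of))
```

A pairing whose count rises year over year is a candidate convergence area worth a closer manual look.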

Using AI Platforms Like Patently

AI platforms like Patently are changing the game for patent analysis, making searches more precise and improving how teams collaborate.

Semantic Search and Vector AI

Traditional patent searches often miss the mark because they rely on exact keyword matches. Imagine one patent uses the term "Drone", while another opts for "Unmanned Aerial Rotorcraft." Without a direct keyword match, valuable connections can be lost. Patently’s AI-powered semantic search bridges this gap by converting queries and documents into numerical vectors (embeddings), so it can spot related patents by comparing meaning even when the wording differs.
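The general mechanism behind vector-based semantic search is a similarity score between embeddings. The sketch below is not Patently's implementation. It uses hand-made three-dimensional vectors in place of a real embedding model, purely to show how cosine similarity ranks differently worded terms.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made toy embeddings; a real system would get these from a trained model.
embeddings = {
    "drone": [0.9, 0.8, 0.1],
    "unmanned aerial rotorcraft": [0.85, 0.75, 0.2],
    "hinge assembly": [0.1, 0.2, 0.95],
}

query = embeddings["drone"]
ranked = sorted(
    (term for term in embeddings if term != "drone"),
    key=lambda term: cosine_similarity(query, embeddings[term]),
    reverse=True,
)
print(ranked)
```

The two aircraft terms share no words, yet their vectors point in nearly the same direction, so the rotorcraft entry ranks first.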

This approach works best as part of a hybrid workflow. First, Vector AI casts a wide net to capture conceptually related patents. Then, Boolean filters refine the results based on specifics like filing dates, classification codes, or patent status. This method not only uncovers technical links across industries and languages but also offers a clear return on investment. For instance, professional AI patent tools typically cost about $200 per month. If such a tool saves 10 hours of legal work billed at $300 per hour, that is $3,000 of billable time, roughly 15 times the monthly fee.
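The two-stage workflow can be sketched as a similarity cutoff followed by metadata filters. Everything here is illustrative: the record fields, patent IDs, scores, and classification strings are invented, and the similarity scores are assumed to come from an earlier embedding step.

```python
from datetime import date


def hybrid_search(candidates, min_score, filed_after, code_prefix):
    """Stage 1 keeps semantically similar hits; stage 2 applies Boolean filters."""
    broad = [c for c in candidates if c["score"] >= min_score]
    return [
        c["id"] for c in broad
        if c["filed"] >= filed_after and c["code"].startswith(code_prefix)
    ]


# Invented records with precomputed similarity scores.
candidates = [
    {"id": "US-A", "score": 0.91, "filed": date(2023, 5, 1), "code": "B64U10/13"},
    {"id": "US-B", "score": 0.88, "filed": date(2019, 2, 11), "code": "B64U10/13"},
    {"id": "US-C", "score": 0.42, "filed": date(2024, 1, 9), "code": "B64U30/20"},
    {"id": "US-D", "score": 0.86, "filed": date(2024, 3, 3), "code": "G06N3/08"},
]
results = hybrid_search(candidates, 0.8, date(2022, 1, 1), "B64U")
print(results)
```

Running the semantic stage first keeps recall high, while the Boolean stage removes hits that are conceptually close but outside the date range or technology class of interest.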

"Hidden prior art detection software relies on semantic understanding, not vocabulary matching."

Golam Rabiul Alam, PhD, Patent AI Lab

These capabilities build on predictive models, delivering insights that patent professionals can act on with confidence.

Data Analysis and Team Collaboration

AI platforms like Patently go beyond search, streamlining team collaboration and portfolio management. With features such as hierarchical categorization, customizable fields, and secure access controls, managing patent portfolios becomes much more efficient. For example, Patently’s Standard Essential Patent (SEP) analytics module provides structured, citation-backed claim charts for technologies like 5G and Wi-Fi. This helps with large-scale infringement or validity analyses.

The results speak for themselves: A top Am Law 100 firm reduced patent search time by 80%, cutting 100 billable hours down to just 20. Similarly, a biotech company saved 10–15 hours per patent application. Patently also offers tools like forward and backward citation browsers for deep citation analysis, while its collaboration features allow teams to share findings, annotate results, and export reports. These tools ensure that patent intelligence becomes a seamless part of daily workflows.

Real-Time Insights for Decision-Making

Real-time insights are another game-changer, turning patent strategies from reactive to proactive. Continuous monitoring alerts users to competitor filings, providing an early warning of potential market shifts before new products are even launched. Automated clustering groups related innovations, highlighting areas of concentrated activity and identifying "whitespace" opportunities - those unexplored areas with minimal patenting but growing research activity.

Research shows that companies using tools like these can spot technology trends 12 to 18 months before they reach mainstream publications. Acting on these insights can lead to significant advantages, with early adopters achieving up to 2.5 times higher revenue growth. By leveraging real-time insights, patent professionals can stay ahead of the curve, identifying and capitalizing on emerging technologies before the competition does.

Measuring Predictive Model Performance

Evaluating how well a predictive model performs is crucial to ensure you're not overlooking new technologies or triggering unnecessary alerts.

Metrics for Model Validation

At the heart of model evaluation are four key metrics: accuracy, precision, recall, and F1-score. Here's what each one tells you:

Accuracy: The percentage of correct predictions overall.

Precision: How many of the patents flagged as relevant truly are, helping cut down on irrelevant results.

Recall: The ability of the model to identify all significant patents - essential for spotting early signs of new technologies.

F1-score: A single measure that balances precision and recall.

When dealing with multiple classes, macro-averaging and micro-averaging offer different perspectives. Macro-averaging treats all classes equally, making it more effective for evaluating performance on rare or emerging subclasses. In contrast, micro-averaging aggregates predictions across all classes, which tends to favor well-established, high-volume fields. For identifying emerging technologies, macro-averaging is the go-to method because it ensures smaller, less frequent classes aren't overshadowed by dominant ones.
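The difference between the two averages is easiest to see with numbers. In this sketch the per-class counts are invented: one large established class the model handles well and one small emerging class it handles poorly.

```python
def f1_score(tp, fp, fn):
    """F1 from raw counts; equivalent to the harmonic mean of precision and recall."""
    denominator = 2 * tp + fp + fn
    return 2 * tp / denominator if denominator else 0.0


def macro_micro_f1(per_class):
    """per_class maps a class name to its (tp, fp, fn) counts."""
    macro = sum(f1_score(*counts) for counts in per_class.values()) / len(per_class)
    total_tp = sum(counts[0] for counts in per_class.values())
    total_fp = sum(counts[1] for counts in per_class.values())
    total_fn = sum(counts[2] for counts in per_class.values())
    micro = f1_score(total_tp, total_fp, total_fn)
    return macro, micro


# Invented counts: (true positives, false positives, false negatives).
per_class = {
    "established": (900, 50, 50),
    "emerging": (5, 5, 15),
}
macro, micro = macro_micro_f1(per_class)
print(round(macro, 3), round(micro, 3))
```

With these counts the micro average stays near 0.94 because the large class dominates, while the macro average falls to about 0.64 and exposes the weak performance on the emerging class.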

A practical example of this comes from Mohamed Keita's 2025 study at SciencesPo Paris. His team fine-tuned a PatentBERT model on 7,000 labeled patents (split evenly between AI and non-AI categories) using a V100 GPU. When tested on 1,400 new patents, the model achieved impressive results: 94% accuracy, 93% precision, 95% recall, and a 94% F1-score. Similarly, PatSeer's AI Classifier reached 95% accuracy when tested on "Gold Standard Datasets" for Quantum Computing and Cannabinoid Edibles.

However, classification metrics alone aren't enough. Efficiency is just as important. Metrics like inference time, memory usage, and energy consumption (including CO2 emissions) become critical when processing millions of patents. Studies show that encoder-based models, such as BERT or PatentSBERTa, can be up to 1,000 times more efficient than Large Language Models (LLMs) in terms of speed and energy use. With these considerations in mind, the next step is to evaluate different model architectures for patent trend analysis.

Comparing Different Models

Evaluating model performance goes beyond just validation metrics. You also need to weigh the strengths and weaknesses of different architectures.

Encoder-Based Models: Models like BERT and PatentSBERTa excel in processing speed and energy efficiency, making them ideal for analyzing high-volume, well-established technology fields. However, they often struggle with rare subclasses and "long-tail" technologies, especially when training data is imbalanced.

Large Language Models (LLMs): Models like Qwen-2.5-7B shine when it comes to identifying rare patent subclasses. They offer greater semantic flexibility and perform well in zero-shot or few-shot scenarios. That said, they come with trade-offs: slower processing times, higher computational demands, and a larger energy footprint.

"Quality matters as much as quantity." - GetFocus

A hybrid approach often delivers the best results. For high-volume, established fields, encoder models are the most practical choice due to their speed and efficiency. For rare or emerging technologies, LLMs are invaluable for their ability to handle nuanced, semantic understanding. Techniques like Retrieval-Augmented Generation (RAG), which incorporates official CPC definitions into LLM prompts, are increasingly used to boost classification precision in technical fields.
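The RAG step can be illustrated with a toy retriever that selects classification definitions by word overlap and places them in the prompt. This is a sketch under loose assumptions: the function names are hypothetical, the definitions are abbreviated subclass titles included for illustration, a production system would use embedding-based retrieval, and no LLM call is made here.

```python
def retrieve_definitions(abstract, definitions, top_k=1):
    """Rank definitions by how many words they share with the abstract."""
    words = set(abstract.lower().split())
    ranked = sorted(
        definitions.items(),
        key=lambda item: len(words & set(item[1].lower().split())),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(abstract, definitions, top_k=1):
    """Assemble a classification prompt that includes the retrieved definitions."""
    context = "\n".join(
        f"{code}: {text}"
        for code, text in retrieve_definitions(abstract, definitions, top_k)
    )
    return (
        f"CPC definitions:\n{context}\n\n"
        f"Abstract: {abstract}\n\n"
        "Which CPC code fits best?"
    )


# Abbreviated subclass titles, for illustration only.
definitions = {
    "G06N": "computing arrangements based on specific computational models",
    "H04W": "wireless communication networks",
    "A61B": "diagnosis surgery identification",
}
abstract = "A wireless sensor that schedules communication across mesh networks"
prompt = build_prompt(abstract, definitions)
print(prompt)
```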

When comparing models, focus on macro-averaged F1 scores. This ensures you're not just optimizing for established fields but also capturing the emerging ones that matter most. Striking the right balance between speed and recall is essential for accurately predicting future trends.

Conclusion: The Future of Patent Analysis with Predictive Models

Key Takeaways

Predictive models have changed how patents and academic journals are analyzed, processing millions of documents at a speed manual review cannot match. Unlike traditional keyword searches, these systems uncover conceptually related inventions, revealing opportunities that might otherwise go unnoticed. This shift transforms patent monitoring from a tedious legal task into a proactive tool for product development - a dynamic "map" of innovation that identifies trends and opportunities before competitors even file.

Today’s machine learning models can forecast patent outcomes with an accuracy exceeding 80%. Generative AI tools are also accelerating early-stage R&D, cutting timelines by as much as 30% to 50%. Platforms like Patently are leading this charge, combining semantic search powered by Vector AI, AI-assisted drafting, and real-time collaboration to move teams away from manual searches and toward strategic, high-level analysis.

"Utilizing artificial intelligence tools is not just about speeding up the patent process - it's also about enhancing the quality of work at every stage of the process."

Menlo Park Patents

The most effective strategy remains a Human-in-the-Loop (HITL) approach. Here, AI handles the heavy lifting - scale, speed, and pattern recognition - while human experts provide critical legal judgment and strategic insights. This partnership allows professionals to identify gaps in underserved technology areas, align research with market needs, and make informed decisions about global patent filings. Together, these advancements sharpen current strategies and open doors to future innovations.

What's Next for AI-Powered Patent Analysis

The future of patent analysis is poised for even greater changes. By 2035, the patent analytics market is projected to grow to $15.69 billion, with an annual growth rate of 8.06%. Meanwhile, the broader large language model (LLM) market could reach $82.1 billion by 2033. These investments will drive advancements in AI's ability to identify disruptive technologies.

Emerging systems will introduce "litigation foresight", offering risk scores to identify patents vulnerable to disputes before conflicts arise. AI will also integrate cross-modal data, extracting chemical structures from PDFs, interpreting algorithms on GitHub, and linking concepts across languages and legal systems. Human-in-the-Loop systems will remain critical, blending AI's scale with expert oversight. The vision is for "Agentic AI", an intelligent layer that supports the entire innovation process - from idea generation to patent portfolio management.

"The future is not one of AI replacing human experts, but of a powerful symbiosis. AI will manage the scale and speed of data, while humans provide the indispensable strategic oversight, ethical judgment, and nuanced interpretation."

This transformation is already underway. AI is now mentioned in over 1.8 million patent documents worldwide, with annual growth exceeding 35%. Organizations that embed these tools into their workflows - treating intellectual property monitoring as an integral part of innovation rather than a legal afterthought - will gain a competitive edge. With predictive models, they can identify trends and opportunities 18 to 24 months ahead of market launches. The real question is no longer if predictive models will be adopted, but how quickly they can be integrated into your innovation strategy.

FAQs

What makes a technology “emerging” in patent data?

Emerging technologies are identified in patent data when they are in their early development phases and exhibit a surge in patent filings. These technologies typically stand out due to their originality and potential to create a strong impact. AI-powered tools, such as semantic analysis, play a key role in spotting these trends. They analyze clusters of patents with increasing activity or distinct characteristics, even when different terms are used to describe similar concepts.

How much labeled data is needed to train a reliable patent model?

Training an effective patent model doesn’t always demand a massive amount of labeled data. Studies show that even just 24 examples can yield impressive results when combined with strategies like active learning, which targets the trickiest cases. For production-level performance, a well-curated dataset of 100–500 examples is often enough. This underscores a key point: quality matters more than quantity when it comes to data.

How do I turn model outputs into real R&D or IP decisions?

To transform AI-generated predictions into actionable steps for R&D or intellectual property (IP) decisions, it's crucial to dig deeper into the data. Start by analyzing predictions to uncover emerging technologies and patent trends that could shape future opportunities. AI tools can play a major role here, helping assess the potential for innovation, guide where to focus R&D efforts, and decide which patents to prioritize for filing.

But don’t rely on AI alone. Pair these insights with expert judgment to evaluate key factors like market demand, the competitive patent landscape, and the practical feasibility of new ideas. This combination ensures that your strategic decisions are not only data-driven but also aligned with your organization’s broader goals.