How to Measure Patent Similarity with Vectors

Intellectual Property Management

Feb 17, 2026

Use vector embeddings (TF-IDF, Doc2Vec, transformers) to measure patent similarity: data prep, cosine scoring, clustering, and IPC validation.

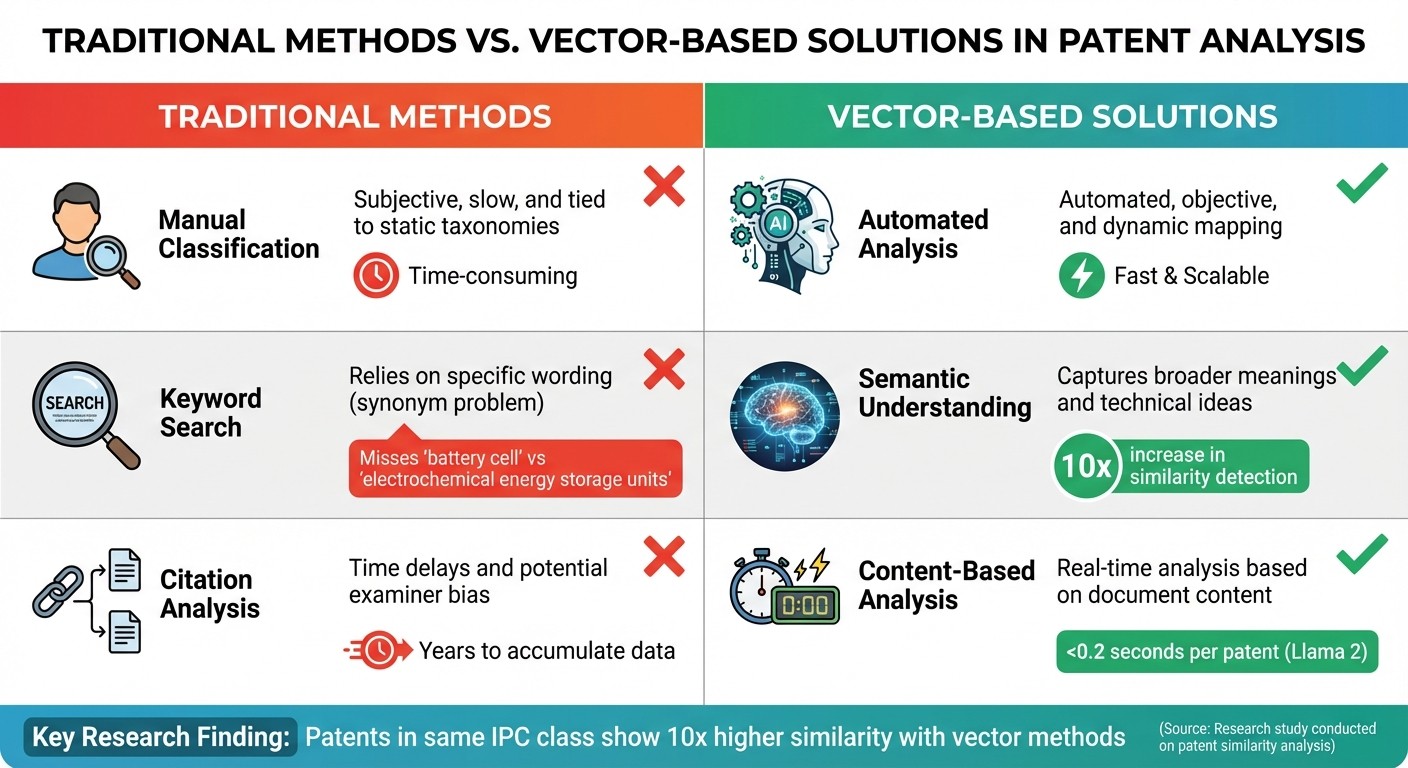

Patent similarity measures how closely patents align in terms of content and concepts. Traditional methods like manual classification, keyword search, and citation analysis often fall short due to issues like subjectivity, synonym mismatches, and time delays. Vector-based models provide a faster, scalable, and more accurate solution by converting patent text into numerical vectors for comparison.

Key takeaways:

Why vectors work better: They analyze the meaning behind technical language, overcoming synonym issues and capturing subtle details.

Top tools: Models like Llama 2 and PatentSBERTa outperform older methods in speed and accuracy.

How it works: Patent text is cleaned, key sections (claims, abstracts) are extracted, and text is converted into vectors. Similarity is measured using metrics like cosine similarity.

Real-world impact: Faster, more precise patent searches, classification, and clustering.

Platforms like Patently simplify this process with tools for semantic search, clustering, and visualization, making patent analysis more efficient.

How Vector Similarity Search Works?

Why Use Vector-Based Models for Patent Similarity

Traditional vs Vector-Based Patent Analysis Methods Comparison

Vector-based models are changing the game in patent analysis. Unlike traditional manual classification systems, which rely on experts to assign patents to fixed categories, these models automatically interpret the meaning behind technical language. This shift tackles some of the biggest challenges in patent analysis.

One major advantage is how these models handle varied terminology. Patent attorneys often use different styles and terms to describe the same concept. Vector models solve this by identifying that different phrases can represent the same idea. This capability leads to stronger semantic understanding, as explored further below.

Benefits of Semantic Understanding in Patents

Vector models are particularly skilled at picking up the subtle technical details in patent claims and descriptions. For instance, in April 2022, researchers Daniel S. Hain, Roman Jurowetzki, Tobias Buchmann, and Patrick Wolf used text-embedding techniques to analyze patents in the electric vehicle sector. By combining natural language processing (NLP) embedding with nearest-neighbor approximation, they mapped knowledge flows and developed patent quality indicators that outperformed older, citation-based measures.

When comparing patents within the same International Patent Classification (IPC) class, embedding techniques showed a tenfold increase in similarity compared to keyword-based methods. This improvement happens because vector models understand technical language in its full context. Instead of treating words as standalone entities, models like BERT and Llama 2 analyze how terms interact within claims and descriptions. This deeper semantic insight makes similarity measurements much more accurate.

"As the vectorization representation approach can more comprehensively characterize the semantics of text and has more advantages in large-scale data processing, it has been widely used in patent text analysis to measure patent semantic similarity recently." – Jiazheng Li, Institute of Computing Technology, Chinese Academy of Sciences

Patent language is notoriously complex - dense, technical, and formulaic. Large Language Models like Llama 2 tackle this challenge by maintaining token-level precision while understanding the broader technical meaning. A study of Chinese patents from 2010 to 2024, covering over 5.3 million documents, showed that Llama 2 consistently outperformed models like BERT, BART, and traditional TF-IDF across all technical fields.

Limitations of Traditional Methods

Despite their long history, traditional methods for analyzing patent similarity have serious flaws. Manual classification systems, such as those based on the IPC, rely on human experts to assign patents to predefined categories. This process is slow, subjective, and resource-heavy.

"Current measures of patent similarity rely on the manual classification of patents into taxonomies." – Kenneth A. Younge and Jeffrey M. Kuhn

Keyword-based searches also face major hurdles. The "synonym problem" means that different terms can describe the same concept, while identical terms can have different meanings depending on context. For example, a search for "battery cell" might miss patents that refer to "electrochemical energy storage units", even though both describe the same technology. As Byunghoon Kim explained, "the drawback of these [keyword] approaches is that they depend on word choice and writing style of authors". This limitation can cause important patents to be overlooked simply because they use different wording.

Citation analysis, another common method, has its own set of issues. Citations take years to accumulate and often reflect examiner preferences or legal requirements rather than technical relevance. In 2012, Tsuyoshi Ide developed a statistical prediction model for patentability using text mining on 300,000 patent applications. His model, which analyzed specification text, outperformed traditional methods that relied solely on citations or classifications.

Method Type | Primary Limitation | Vector-Based Solution |

|---|---|---|

Manual Classification | Subjective, slow, and tied to static taxonomies | Automated, objective, and dynamic mapping |

Keyword Search | Relies on specific wording (synonym problem) | Captures broader meanings and technical ideas |

Citation Analysis | Time delays and potential examiner bias | Real-time analysis based on document content |

Vector-based models overcome these traditional limitations by automating processes and offering deeper semantic insights. They allow for pairwise comparisons across entire patent databases, replacing static systems with dynamic networks of technology. For example, Llama 2 can calculate patent similarity in under 0.2 seconds per sample using GPU acceleration. This speed makes large-scale semantic analysis practical for daily patent-related tasks, streamlining data processing in ways that older methods simply can't match.

Preparing Patent Data for Vectorization

To measure patent similarity using vectors, you first need to prepare your patent data in the right format. This preparation is crucial for ensuring that your vector models can accurately capture the technical relationships between patents. With the USPTO database containing over 10 million patents, efficient data extraction methods are essential. The quality of this preparation directly influences the accuracy of your analysis.

Start by cleaning and deduplicating your data. This step ensures consistency and eliminates errors that could skew results. Perform exploratory data analysis using descriptive statistics to identify anomalies in the text fields before converting them into embeddings. These steps lay the groundwork for extracting the most relevant sections of each patent.

Extracting Key Patent Sections

Not every part of a patent document is equally useful for similarity analysis. Certain sections carry more weight due to their technical and legal significance:

Claims: These outline the legal and technical scope of the invention, making them critical for identifying specific technical details.

Abstracts: These provide concise summaries, ideal for initial semantic matching.

Descriptions: These offer a complete technical disclosure, essential for a deeper understanding of the invention.

"Patent documents typically use legal and highly technical language, with context-dependent terms that may have meanings quite different from colloquial usage." – Grigor Aslanyan, Software Engineer, Google

A good starting point is combining the patent title and abstract, but including claims and descriptions allows for a more detailed view of the invention's technical scope. Interestingly, patent pairs that share the same IPC class show a nearly tenfold increase in similarity compared to those that do not.

After identifying these key sections, the next step is applying text processing techniques to preserve their technical nuances.

Text Preprocessing Techniques

Processing patent language requires a specialized approach, as standard text cleaning methods often fall short. Begin by removing non-semantic characters and punctuation. Pay attention to patent-specific stop words like "said", "and", or "or", which can add unnecessary noise to the vector space. Instead, focus on extracting technical nouns and functional phrases, as these better represent concept similarity. Stemming or lemmatization can also help by treating variations of the same term - like "electrification" and "electrified" - as equivalent.

Finally, validate your preprocessed data against IPC or CPC classifications to ensure that similarity clusters align with technical logic. This step is key to scaling efficiently for vector-based analysis.

Generating Patent Vectors

Once your patent text is preprocessed, the next step is to convert it into numerical vectors for analysis. The choice of vectorization method will depend on the size of your dataset and the level of precision you need.

Traditional methods, such as Bag-of-Words (BoW) and TF-IDF, rely on word counts and statistical significance to represent patents. These approaches are great for initial filtering of large datasets. However, they often fail to capture semantic relationships. For instance, terms like "battery" and "energy storage" might not be identified as related.

Neural embedding methods, including Word2Vec and Doc2Vec, take this a step further by learning word contexts. Doc2Vec, in particular, generates a single vector for an entire patent document, capturing more semantic meaning than keyword-based methods. Some hybrid approaches even combine TF-IDF with Word2Vec to highlight critical technical terms.

Transformer-based models represent the latest advancements in this field. These models use attention mechanisms to understand the contextual meaning of patent text, making them especially effective for analyzing claims and descriptions. Domain-specific models like PatentSBERTa and Bert-for-patents are fine-tuned for the technical language used in patents.

"PatentSBERTa, Bert-for-patents, and TF-IDF Weighted Word Embeddings have the best accuracy for computing sentence embeddings at the subclass level." – Hamid Bekamiri

In October 2025, researchers Jiazheng Li, Jian Zhou, and Mengyun Cao tested six vectorization models using 5,343,200 Chinese patents from 2010 to 2024. Their study revealed that Llama 2 outperformed other models, including BERT and BGE, achieving inference speeds of less than 0.2 seconds per sample on an Nvidia A800 GPU.

"The performance of Llama 2 is the best among six compared models in all years and in all technical fields." – Jiazheng Li, Institute of Computing Technology, Chinese Academy of Sciences

Let’s now dive into the most commonly used vectorization techniques, ranging from statistical approaches to advanced neural models.

Common Vectorization Techniques

The methods for vectorizing text vary widely, from straightforward statistical techniques to more advanced neural models. TF-IDF remains a popular choice for its speed and ability to emphasize less common technical terms while ignoring frequent legal jargon. Other statistical methods, like Latent Semantic Indexing (LSI) and Latent Dirichlet Allocation (LDA), group patents into thematic topics, making them useful for identifying patterns in large datasets.

Doc2Vec provides a balanced approach by generating document-level embeddings that capture semantic relationships without requiring GPU resources. This method is particularly effective in addressing the "synonym problem", where different terms describe the same technology.

For massive datasets, such as the USPTO or PATSTAT databases with around 12 million patent applications, tools like Annoy (Approximate Nearest Neighbors Oh Yeah) are often used to speed up computations. In 2022, researchers Daniel S. Hain and Roman Jurowetzki applied TF-IDF weighted Word2Vec embeddings with Annoy to build a scalable similarity network for electric vehicle patents, enabling them to trace knowledge flows effectively.

Selecting the Right Technique for Your Needs

With these techniques in mind, choosing the right one depends on your goals and computational resources. TF-IDF is a solid option for quick filtering, especially when working with millions of patents on CPU-only systems. It’s efficient even with vocabularies exceeding 20,000 terms.

For more detailed analysis, PatentSBERTa or Bert-for-patents are excellent choices. These models excel at identifying closely related innovations or conducting prior art searches using top patent tools, as they are optimized for technical text. On the other hand, conversational embeddings like BGE may struggle with the nuances of patent language.

When accuracy is your top priority, large language models like Llama 2 are the way to go. They deliver exceptional precision alongside fast inference times.

It’s also important to consider which section of the patent you're analyzing - claims, abstracts, or full descriptions - as performance can vary depending on the focus. Finally, validating your method against established IPC or CPC classifications can help ensure that your vectors accurately capture technological relationships. For example, patents sharing the same IPC class often exhibit a tenfold increase in similarity compared to random pairs.

Measuring Patent Similarity Using Vectors

Once you've created vectors for your patent documents, the next step is figuring out how similar they are. One popular method for this is cosine similarity, which measures the angle between two vectors. What's great about this approach is that it focuses on the direction of the vectors rather than their length. This makes it perfect for comparing patent texts of different lengths, like a detailed patent description and a shorter abstract, without biasing the results toward one or the other.

The formula for cosine similarity is:

$\cos(\theta) = \frac{A \cdot B}{|A| |B|}$

Here, the dot product of the two vectors is divided by the product of their magnitudes. The resulting similarity score ranges from -1 to +1:

+1 means the vectors are perfectly aligned (maximum similarity).

0 indicates no relationship (orthogonal vectors).

Negative scores, while mathematically possible, are uncommon in patent text analysis.

This metric is a solid starting point for deeper analysis of how patents relate to one another.

Using Cosine Similarity for Comparisons

Cosine similarity works hand-in-hand with vector embeddings by converting high-dimensional data into actionable rankings. However, there’s a catch: avoid using fixed thresholds like "scores above 0.6 mean similarity." The range of scores can vary widely depending on the vectorization model you use. Instead, treat similarity as a ranking task - focus on how patents compare to one another rather than sticking to rigid cutoff points.

For example, in November 2025, Dataquest used 500 research papers from arXiv to build a semantic search system. They generated 1,536-dimensional embeddings with Cohere's embed-v4.0 model and used scikit-learn's cosine_similarity function. A query for "query optimization algorithms" returned a top score of 0.4206, marking the best match in their dataset.

"The manual threshold varies using different vectorization models and weakens the objectivity of the evaluation on patent semantic similarity." – Jiazheng Li, Jian Zhou, and Mengyun Cao, Chinese Academy of Sciences

To ensure meaningful results, align your similarity scores with the International Patent Classification (IPC) hierarchy. Patents with longer shared IPC prefixes (e.g., same subgroup vs. same section) should naturally have higher similarity scores. This offers a clear benchmark for assessing whether your calculations are capturing real technological connections.

Implementing Similarity Calculations with Python

Now that the theory is clear, let's talk about implementation. Python provides several libraries to compute patent similarity, each tailored for different needs:

NumPy: Great for manual implementations if you want to dive into the math behind cosine similarity.

Scikit-learn: Perfect for batch processing large datasets. Its

cosine_similarityfunction handles vector broadcasting and reshaping automatically.Gensim: Ideal for those using Doc2Vec or Word2Vec models trained on patent text, offering built-in methods for finding similar documents.

Annoy: For massive datasets, like comparing a single patent to the entire USPTO database, Annoy (Approximate Nearest Neighbors Oh Yeah) speeds up similarity searches by approximating nearest neighbors.

One important tip: if your vectors are normalized to unit length, the dot product becomes equivalent to cosine similarity - making computations faster. Also, when running similarity searches, remember to filter out the query patent itself, as it will always score a perfect 1.0. After calculating similarity scores, sort the results in descending order to identify the Top-N most similar patents.

Metric | Range | Best Use Case | Key Advantage |

|---|---|---|---|

Cosine Similarity | -1 to +1 | Text embeddings/NLP | Ignores document length; ideal for semantic search |

Dot Product | $-\infty$ to $+\infty$ | Normalized vectors | Fast computation; equivalent to cosine when normalized |

Euclidean Distance | 0 to $+\infty$ | Scientific/geometric data | Measures straight-line distance; lower values = more similar |

Analyzing and Visualizing Results

After calculating similarity scores, the next step is turning those numbers into a meaningful analysis of patent relationships. This process uses vector similarity data to uncover technology clusters and connections. By grouping and validating these raw figures, you can extract actionable insights from the patent landscape.

Clustering and Dimensionality Reduction

To organize patent vectors into groups, K-means clustering is a practical starting point. Using Euclidean distance, you can segment patents into clusters - say, setting K=10 to divide 1,000 patents into distinct technological groups. These clusters can then be analyzed over time to track trends, like the rise or decline of specific fields. A patent map - essentially a visual snapshot of the technological landscape - can highlight knowledge flows and pinpoint key patents driving innovation in emerging areas [22, 4]. For a more advanced approach, Bayesian clustering uses probability distributions (e.g., Gaussian or Laplace) to handle high-dimensional data, addressing challenges like the curse of dimensionality.

For visualization, t-SNE (t-distributed Stochastic Neighbor Embedding) is a powerful tool to reduce high-dimensional vectors into 2D or 3D plots. This makes complex data easier to interpret. However, its effectiveness relies on fine-tuning the perplexity parameter, typically set between 5 and 50, to balance local and global data relationships. As Martin Wattenberg from Google Brain explains:

"The perplexity value has a complex effect on the resulting pictures... Getting the most from t-SNE may mean analyzing multiple plots with different perplexities."

It’s worth noting that t-SNE can distort cluster sizes and distances by equalizing density. For very high-dimensional data, applying PCA (Principal Component Analysis) beforehand can help reduce noise and speed up computations.

Once clusters are visualized, the next step is to validate them against established patent classifications. This ensures your groupings align with recognized technological relationships.

Validating Results Against Patent Classifications

To ensure your visual groupings reflect real-world technological connections, compare them to the International Patent Classification (IPC) system. Patents with longer shared IPC prefixes (e.g., the same subgroup rather than just the same section) often show higher similarity scores. In fact, patents within the same IPC class can exhibit up to a tenfold increase in technological similarity.

The Rank Consistency Index (RCI) is a helpful metric for validation. It measures how well your model’s similarity rankings align with IPC-based standards. A large-scale study analyzing over 5.3 million patents (spanning 2010–2024) found that models like Llama 2 outperform traditional approaches like TFIDF across various technical domains.

Another validation method is citation network analysis. If two patents cite the same prior art, they are likely related. Additionally, benchmarking your results against USPTO "102 rejection" cases - where examiners identify legally recognized similar patent pairs - provides further confirmation of your model’s accuracy [24, 5].

Using Patently for Patent Similarity Analysis

Patently simplifies patent similarity analysis by using cutting-edge vector-based techniques, creating a streamlined, end-to-end process. While custom solutions for vector-based patent analysis exist, Patently combines these methods into a single, user-friendly platform. The goal? To make patent management more efficient and focus your attention on actionable insights.

Patently's Vector AI Capabilities

Patently’s semantic search is driven by advanced Vector AI, which understands the meaning behind patent language rather than just matching keywords. As Patently describes:

"Our advanced search feature, powered by Vector AI, makes finding relevant patents faster, more intuitive, and incredibly precise."

The platform’s Smart Search feature takes it a step further by allowing users to upload files in various formats - text, images, PDFs, and Office documents - to create a comprehensive query based on your technical concept. On top of that, Patently employs its proprietary Graph AI to deliver highly accurate patent searches without the need for complicated Boolean logic. This approach minimizes human error and reduces the time spent refining search queries. These innovations don’t just improve search but also streamline the entire patent management process.

Integrating Patent Workflows with Patently

Patently doesn’t stop at search - it integrates vector-based similarity analysis into the broader patent workflow. This includes support for tasks like clustering and semantic searches. You can organize your similarity projects using hierarchical categories, such as by client, matter, or technology area, with automated updates every 30 days.

For collaboration, the platform centralizes workflows with shared comments, asset-level ratings, and robust access controls, including ethical walls. It also offers customizable Word or Excel report exports for easy sharing. Additionally, the Patently Know feature provides a visual map of patent families, making it easier to trace relationships between related intellectual property assets.

Conclusion

Vector-based patent similarity measurement has shifted the focus from manual classification to automated semantic analysis. As Kenneth A. Younge and Jeffrey M. Kuhn noted, "Current measures of patent similarity rely on the manual classification of patents into taxonomies... we develop a machine-automated measure of patent-to-patent similarity... [that] significantly improves upon existing patent classification systems". This approach dives deeper than simple keyword matching, uncovering the technical concepts hidden within patent language.

Selecting the right model is essential for achieving accurate results. Research involving over 5.3 million patents demonstrated that Large Language Models (LLMs) like Llama 2 consistently outperformed older methods and pre-trained models across various technical domains. That said, traditional TFIDF remains a strong contender for lengthy, technical patent texts, especially when computational resources are limited or restricted to CPU-only environments.

To implement this effectively, start by preprocessing patent data - concatenate titles and abstracts for better representation. Choose your vectorization model based on the resources you have available. LLMs should be your go-to for precision, but TFIDF is a reliable alternative when speed and efficiency are priorities. Finally, validate your results against IPC benchmarks to ensure accuracy. These steps create a solid foundation for precise and efficient patent similarity analysis.

Platforms like Patently simplify this process with their Vector AI-powered semantic tools and Smart Search capabilities. By handling the technical heavy lifting, Patently integrates smoothly into your workflow, allowing you to focus on interpreting results and making strategic decisions about your patent portfolio.

Whether you're conducting prior art searches, mapping out competitive landscapes, or evaluating risks, vector-based similarity measurement provides a scalable and objective solution. The technology is proven, the tools are ready, and adopting this approach can offer a clear edge in modern patent analysis.

FAQs

Which patent sections should I vectorize for the best similarity results?

For the best similarity results, concentrate on vectorizing patent sections rich in detailed text and technical information. Focus on key areas like the abstract, claims, and description, as these sections typically offer the most thorough and relevant data for comparison.

How do I pick the right embedding model for my dataset and hardware?

When selecting an embedding model, focus on how well it aligns with your dataset's domain and whether its accuracy benchmarks meet your needs for capturing critical semantic features. Keep in mind your hardware capabilities - some models demand substantial computational power. Experimenting with different models can help you find the right balance between performance and resource usage. This is especially important for tasks like patent similarity analysis, where generating high-quality text embeddings is crucial.

How can I validate that my similarity scores are actually meaningful?

To make your similarity scores meaningful, it's important to rely on objective evaluation methods. Consider using established frameworks designed to assess the effectiveness of vectorization models. You can also validate accuracy by comparing your scores against benchmark datasets like the Patent Similarity Dataset or manually classified patent pairs. These methods offer practical insights into how accurately your scores represent true semantic similarity.