How AI Improves Metadata Standardization

Intellectual Property Management

Feb 24, 2026

AI automates patent metadata standardization—improving classification, multilingual translation, semantic search, and predictive analytics to boost search accuracy and cross-jurisdictional consistency.

AI is transforming how metadata is managed in the patent industry, solving issues like inconsistency, poor formatting, and incomplete data. By automating classification, improving semantic search, and handling multilingual data, AI ensures patent metadata is more accurate and reliable. Here's what you need to know:

Metadata Challenges: Inconsistent terminology and classification errors reduce search accuracy. For example, mistakes like misclassifying "lung" as "lung cancer" can obscure critical data.

AI Solutions: Tools like BERT for Patents and GPT-4-based models analyze patent texts, assign codes, and improve recall rates from 17.65% to 62.87%.

Global Standardization: AI bridges jurisdictional differences in metadata formats, links patent families, and integrates multilingual data for unified searches.

Efficiency Gains: Automated systems process thousands of patents quickly, reducing manual effort and improving precision.

AI doesn't replace human expertise but enhances it, enabling professionals to focus on higher-level tasks like legal strategy and portfolio management. Tools like Patently, one of the top patent tools available, integrate these advancements, offering features such as semantic search and citation navigation to streamline workflows.

How Enterprises Uses Generative AI for Patent Search, Drafting & Classification | IP Author Webinar

How AI Transforms Metadata Standardization

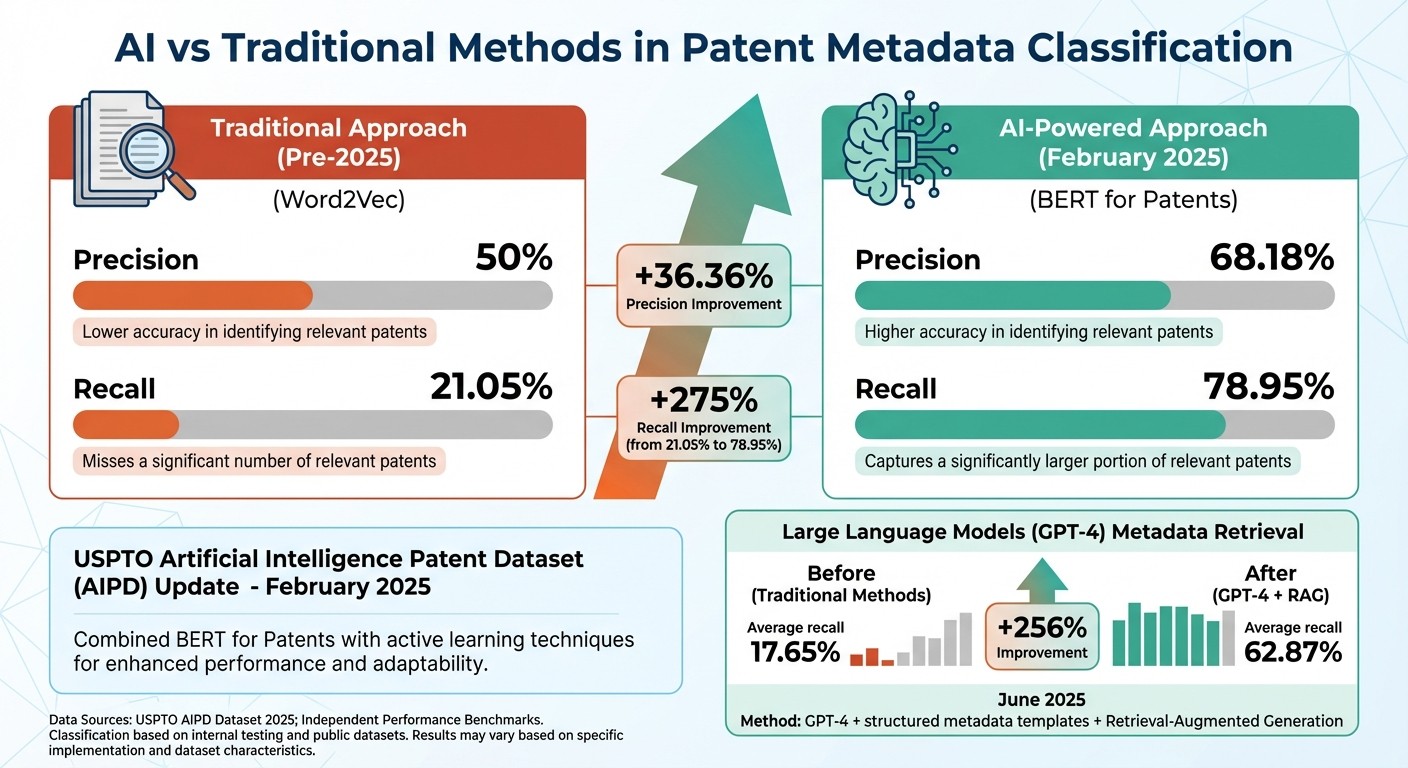

AI vs Traditional Methods: Patent Metadata Classification Performance Comparison

Inconsistent metadata has long been a hurdle for global patent searches. Now, AI is stepping in to provide a streamlined, high-precision solution. By using Natural Language Processing (NLP) and transformer-based models, AI automates the process of analyzing patent texts and assigning classification codes. This marks a departure from older methods like Word2Vec, with advanced models such as BERT for Patents offering a much deeper semantic understanding.

In February 2025, the USPTO introduced an updated Artificial Intelligence Patent Dataset (AIPD), which combined BERT for Patents with active learning techniques. The results were impressive: precision in identifying AI-related patents jumped to 68.18%, with recall reaching 78.95%. Comparatively, the earlier approach only managed 50% precision and 21.05% recall.

"The key to success in automating prior art search in patent research using artificial intelligence (AI) lies in developing large datasets for machine learning (ML) and ensuring their availability."

– Boris Genin, Division for the Design of Information Search Systems, Federal Institute of Industrial Property

AI is also revolutionizing cross-database research. Between December 2025 and January 2026, researchers Boris Genin and Alexander Gorbunov developed an infrastructure that created 15 million semantic clusters - 14 million for U.S. patents and 1 million for Russian patents. These clusters highlight thematic relationships, making it easier to uncover prior art even when terminology varies.

Large language models (LLMs) are another game-changer. In June 2025, researchers showed that combining GPT-4 with structured metadata templates increased average metadata retrieval recall from 17.65% to 62.87%. By integrating official classification definitions through Retrieval-Augmented Generation, these systems ensure that classification codes align closely with the patent text’s context.

Automated Classification and Tagging

AI-driven systems now handle the complex task of assigning IPC and CPC codes automatically. These systems analyze the full text of patent documents, not just titles or abstracts, to understand the technical language in context. By combining textual analysis with existing classification labels, they generate embeddings that capture both the semantic and structured aspects of the content. This automation processes thousands of patents in the time it would take a person to review just a few, allowing professionals to focus on higher-level tasks like strategy and portfolio management.

Semantic Search and Clustering

AI also enhances patent research through semantic search. Unlike basic keyword matching, semantic search interprets the meaning behind patent claims, making it possible to identify related patents even when different terminology is used. This capability is vital for patent landscaping, where patents are grouped by technical or application-based criteria. The Federal Institute of Industrial Property’s creation of 15 million semantic clusters is a prime example of how semantic search can quickly reveal the state of the art in any field.

Large Language Models for Contextual Metadata

LLMs are pushing metadata management even further. While traditional encoder-based models excel with well-defined, frequent categories, LLMs shine in handling "long-tail" areas - those rare or emerging technologies that older models might miss.

In April 2024, researchers introduced PatentGPT, a specialized LLM for intellectual property tasks. When tested on the 2019 China Patent Agent Qualification Examination, PatentGPT scored 65 points, matching human experts and outperforming general-purpose models like GPT-4 in IP-specific scenarios. Its Sparse Mixture of Experts architecture allows it to process lengthy patent texts efficiently.

"These results underscore the transformative potential of combining advanced language models with symbolic models of standardized metadata structures for more effective and reliable data retrieval."

– Sowmya S. Sundaram, Researcher

For patent professionals, a hybrid approach works best: use encoder-based models for high-volume, standard tasks and reserve LLMs for nuanced, emerging areas where context is critical. These advancements are setting the stage for more efficient and reliable patent metadata management.

Cross-Jurisdictional Metadata Consistency

Patent metadata differs significantly across jurisdictions. Offices like the USPTO, EPO, JPO, and CNIPA each have their own formatting rules, classification systems, and terminology. Today, AI-driven tools are stepping in to streamline this process. These systems not only standardize metadata within individual jurisdictions but also translate and integrate it across global patent systems. The result? Unified frameworks that connect related patents worldwide while accurately translating technical language.

Family Linking for Multi-Jurisdictional Patents

AI-powered multilayer network frameworks are transforming how patent families are linked across jurisdictions. Instead of isolating data from each patent office, these frameworks connect applications with shared priorities, offering a global view of protection strategies. They also capture citation metadata in distinct layers, identifying the issuing office and citation type. This method reveals technological relationships that would otherwise go unnoticed in single-jurisdiction analyses.

For example, in November 2024, LexisNexis PatentSight+ used AI visualization to analyze Tesla's patent family EP3776696.A1 for dry electrode technology. The system traced its origins to a U.S. provisional application (US201862650903.P) filed in March 2018. It then mapped its progression to non-provisional filings in China, Europe, Japan, and South Korea on March 28, 2019. This analysis showcased Tesla's strategic approach to securing a first-to-file advantage and refining claims through divisional and continuation applications in key electric vehicle markets.

The USPTO's February 2025 AIPD update highlights the scale of family linking. Using the "national family" definition - which connects applications sharing at least one priority across offices - researchers applied BERT for Patents to 15.4 million documents. They then used a family-citation-family expansion method to identify all related family members and their forward and backward citations, creating a cross-jurisdictional metadata web. These frameworks are a game-changer for overcoming language barriers in global patent searches.

Multilingual Neural Translation

AI also tackles language barriers with neural translation models trained specifically on patent datasets. The ParaPat corpus, for instance, features over 68 million sentences and 800 million tokens across 74 language pairs. Similarly, EuroPat offers parallel data for six official European languages, with the Spanish-English pair alone containing 51 million sentences.

These models enable semantic searches by focusing on conceptual meanings rather than literal keywords. For instance, terms like "mobile handset" and "wireless communication device" are linked across languages, enhancing metadata consistency in patent searches. This capability is critical for capturing all prior art and reducing the risk of patent infringement. Neural translation tools powered by AI have been shown to cut research time by up to 70%.

"AI-based systems can provide accurate translations of technical and legal jargon across multiple jurisdictions, effectively dismantling language barriers that typically impede global patent searches."

– DrugPatentWatch

One of the standout benefits of neural translation is its ability to preserve technical terminology during the translation process. This ensures that metadata fields like IPC/CPC codes and chemical structures remain accurate - something general-purpose translation tools often struggle with. With the global patent analytics market expected to grow from $1.13 billion in 2024 to $3.03 billion by 2032, these AI-driven tools are becoming indispensable for managing the 3.5 million new applications filed each year, alongside the 150 million existing patent documents.

Predictive Analytics for Metadata Management

With advancements in metadata classification and efforts to standardize across jurisdictions, AI-powered predictive analytics now tackles inconsistencies as they arise, instead of waiting for them to disrupt searches. These systems work by monitoring filing patterns, automating quality checks, and flagging anomalies in real time, ensuring smoother data operations.

One standout technique is confidence scoring, which assigns probability levels to metadata classifications and tags. If a score dips below a set threshold - commonly 90% - the system generates alerts for human review. This method is far more efficient than traditional manual audits. For context, manual data entry typically produces 100–400 errors per 10,000 inputs, while AI-driven categorization has achieved accuracy rates above 90%.

Another key element is the use of active learning techniques, particularly for cases near "decision boundaries" where AI struggles with categorization. By retraining models on these flagged instances, the system becomes progressively better at classifying metadata, reducing the need for exhaustive manual reviews.

Predictive analytics also leverages semantic search and automated classification tools to track filing velocities and analyze citation networks. This helps identify trends and differentiate between groundbreaking patents and incremental improvements. For example, a surge in patent filings within a specific technology signals a wave of innovation, while a plateau suggests market maturity. This insight allows organizations to make informed decisions about which patents to maintain and which to abandon, potentially cutting portfolio costs by up to 30%.

A practical application of this approach can be seen in the work of the Stanford Center for Biomedical Informatics Research in February 2025. Using GPT-4 and structured CEDAR templates, researchers standardized 4,800 records from BioSample and GEO repositories. Sowmya S. Sundaram and Mark A. Musen corrected mismatches like moving "lung cancer" from a tissue field to a disease field, boosting average search recall from 17.65% to 62.87%. This example highlights how predictive analytics can address metadata inconsistencies early, enhancing search effectiveness and overall data quality.

Patently's AI-Driven Metadata Standardization Features

Patently harnesses the power of AI to tackle the challenges of metadata standardization head-on. By using Vector AI-powered semantic search, the platform enhances search accuracy through contextual understanding, moving beyond the limitations of traditional keyword searches. This is especially helpful in navigating technical terminology, which often complicates discovery. Patently also integrates external data sources and employs robust error-checking mechanisms to unify metadata seamlessly.

Another standout feature is the Forward and Backward citation browser. This tool simplifies navigation through patent families and citation networks by organizing data at both the family and asset levels. It ensures that connections between related patents remain intact, even when different jurisdictions use varying classification systems or naming conventions. These capabilities form the foundation for Patently's specialized tools, which are tailored to meet a variety of user needs.

Feature Comparison Across Patently's Plans

Patently offers a range of subscription plans, each designed to cater to different levels of metadata management requirements. Here's a breakdown of the features included in each plan:

Plan | Price | Semantic Search | Citation Browser | External Data Integration | Team Collaboration | AI Drafting |

|---|---|---|---|---|---|---|

Free | Free | ✗ | ✗ | ✗ | ✗ | ✗ |

Starter | $125/mo/user | ✓ | ✓ | ✓ | ✓ (up to 10 users) | ✗ |

Business+ | Custom Pricing | ✓ | ✓ | ✓ | ✓ (unlimited users) | ✓ |

The Free plan is perfect for occasional users, offering basic patent search functions, filters, and family browsing.

The Starter plan is tailored for small teams working on cross-jurisdictional patents. It includes core tools like semantic search, analytics, and the Forward and Backward citation browser.

For larger organizations, the Business+ plan adds advanced features like AI-assisted patent drafting tools and custom fields, enabling teams to align metadata workflows with specific organizational needs.

Each plan ensures that users can access tools that match their scale and complexity, making metadata management both efficient and scalable.

Conclusion

AI has reshaped the way patent professionals manage metadata standardization, turning a once tedious and costly manual process into a streamlined, automated workflow. Studies show that AI-powered standardization not only enhances efficiency but also reduces the chance of missing patents, leading to more thorough prior art searches.

For those in the patent field, this means less time spent cleaning up data and more time dedicated to strategic decision-making. By aligning metadata with FAIR principles - making it Findable, Accessible, Interoperable, and Reusable - AI ensures that patent data is usable across jurisdictions and languages. This advancement improves search accuracy, simplifies the management of cross-jurisdictional patent families, and eases the handling of multilingual data, significantly lightening the cognitive load for professionals. Tools like Patently leverage these advancements, offering features like advanced semantic search and citation navigation to optimize workflows.

Platforms such as Patently bring these innovations to life. By integrating Vector AI-driven semantic search with tools like Forward and Backward citation browsing, patent professionals gain deeper, context-rich insights that extend beyond basic keyword searches. Automated classification and predictive analytics further enhance the user experience, ensuring that even complex technical language and diverse classification systems are no longer obstacles to discovery.

The role of AI in patent metadata management isn’t about replacing human expertise - it’s about enhancing it. With AI taking care of metadata standardization, professionals can focus on strategic analysis, uncovering opportunities, and delivering meaningful value to their organizations and clients.

FAQs

What patent metadata fields can AI standardize automatically?

AI has the ability to automatically organize and standardize patent metadata fields, including labels, content, media assets, and other descriptive details. This helps maintain consistency and ensures everything aligns with established industry standards.

How does semantic search find patents without exact keywords?

Semantic search finds patents by understanding the meaning of the text, not just matching exact keywords. Using natural language processing (NLP), it evaluates how concepts relate to each other. This approach delivers more accurate results by focusing on context rather than relying solely on specific word matches.

When should humans review AI-generated classifications?

When accuracy, consistency, or legal standards are critical, humans should step in to review AI-generated classifications. This becomes particularly important in fields like patent law or when dealing with ambiguous or complex results. For instance, human oversight is essential to ensure compliance with legal requirements or to interpret unclear AI outputs effectively.