Natural Language Processing for Patent Retrieval

Intellectual Property Management

May 14, 2026

NLP-driven semantic search improves patent retrieval, handles complex claims and multilingual prior art fast.

Patent searches are changing, thanks to Natural Language Processing (NLP). Here's what you need to know:

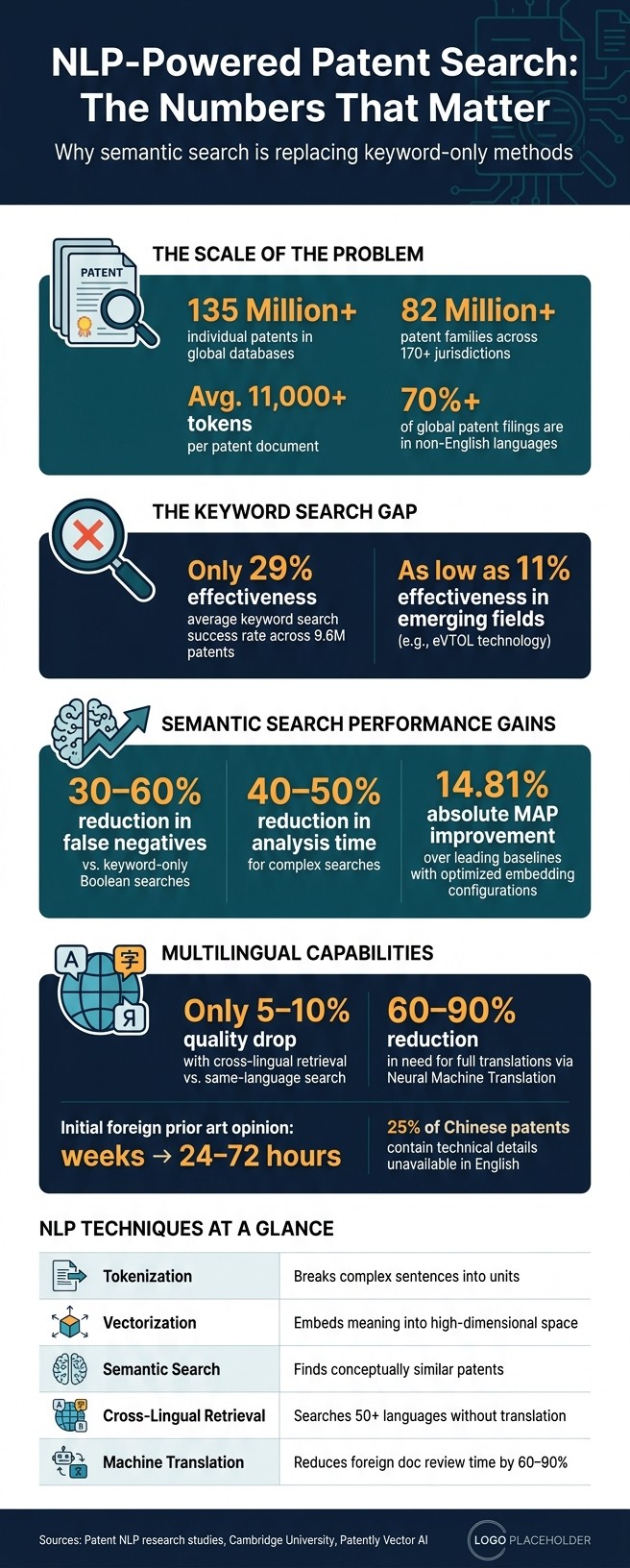

Why it matters: Patents are complex, with over 11,000 tokens on average. Traditional keyword searches often miss relevant results due to vocabulary mismatches and document structure.

How NLP helps: Using transformer-based models, NLP enables semantic search, which focuses on meaning instead of exact words. This approach reduces false negatives by 30–60% and saves time.

Key features: NLP tools like Patently's Vector AI use vectorization, semantic search, and cross-lingual capabilities to handle large, multilingual patent databases. They also integrate into drafting workflows for real-time prior art retrieval.

Challenges solved: NLP addresses issues like claim structure analysis, antecedent references, and multilingual searches, making global patent retrieval faster and more accurate.

NLP is transforming how patents are searched, analyzed, and drafted by focusing on context and meaning rather than just keywords. Let’s explore how this works.

NLP vs. Keyword Patent Search: Key Stats & Performance Gains

How NLP Handles Patent Text

Structure and Language of Patent Texts

Patents are legal documents with a strict format, including sections like the title, abstract, background, detailed description, and claims. Each section serves a specific purpose, so NLP systems must process them individually rather than treating the document as a single, uniform text.

The claims section is particularly challenging. Claims are structured as long, intricate sentences divided into three parts: preamble, transitional phrase, and limitation body. The transitional words in this section aren't just grammatical - they carry legal weight, defining the scope of the patent's protection. For NLP systems to interpret these claims correctly, they must be trained to identify and understand these "signal words."

Another complexity lies in the use of the "lexicographer rule", where inventors redefine common words or create entirely new terms for their inventions. For instance, a patent might redefine "fluid" to include solids under specific conditions. General-purpose language models, trained on everyday language, often misinterpret these custom definitions. This highlights the importance of training NLP models on patent-specific datasets.

Additionally, resolving antecedent basis - tracking references within the text - is crucial. For example, a patent might introduce "a motor" and later refer to it as "the motor." Misinterpreting these references can lead to errors in searches and analyses. Standard NLP models frequently struggle with this, underscoring the need for specialized techniques.

These unique structural and linguistic features require tailored NLP approaches.

Core NLP Techniques for Patent Text Analysis

To address these challenges, NLP employs several techniques for analyzing patent text. Tokenization breaks down complex sentences into manageable units, while stemming reduces words to their base forms (e.g., "driving", "driven", and "drives" all map to "drive"). This helps the system identify variations of a concept without listing every possible form.

Vectorization is another key tool, embedding text into high-dimensional vectors that capture meaning and context. Transformers enhance this process by mapping patent text into vectors that reflect not just word usage but also underlying ideas. This ensures that patents describing the same invention in different terminology align closely in semantic space. Algorithms like Cosine Similarity and K-Nearest Neighbors (KNN) measure these relationships, while Approximate Nearest Neighbor (ANN) algorithms enable rapid searches across massive databases, delivering results in milliseconds.

Semantic search technology has proven effective in reducing false negatives during prior art searches, cutting them by 30% to 60% compared to keyword-only methods. This is critical because overlooking a relevant reference can jeopardize the validity of a patent. The advent of long-context models like GPT-4 and Llama-3.1, capable of handling up to 128,000 tokens, is also significant. These models can process the entirety of a patent's text, which often exceeds 11,000 tokens - something earlier models couldn't handle.

"The language of modern patents has substantially evolved and diverged from normal technical writing... [they] contain deceptive elements added for increasing the scope or camouflage important aspects." - Lekang Jiang and Stephan Goetz, University of Cambridge

Together, these techniques form the backbone of efficient and accurate patent search and analysis workflows.

Multilingual and Cross-Jurisdictional Challenges

Patents are filed across over 170 jurisdictions, covering more than 82 million patent families and 135 million individual patents. This global scope means NLP must handle documents in multiple languages, often for the same invention.

Cross-Lingual Information Retrieval (CLIR) helps by mapping search queries to equivalent technical terms in other languages. For example, a search in English can uncover relevant patents filed in Japanese, German, or Chinese without requiring separate searches in each language. Neural Machine Translation (NMT) further streamlines this process by triaging foreign-language documents. Instead of translating entire texts, NMT identifies promising candidates for human review, reducing the need for full translations by 60% to 90%. This can cut the time for an initial opinion on foreign prior art from weeks to just 24–72 hours.

However, machine translation in patent contexts carries legal risks. A single mistranslation could invalidate a patent, making it unsuitable for formal filings or litigation. Machine translation is best suited for discovery and triage, with human verification remaining essential for legal accuracy. Combining text-based searches with non-textual signals, such as CPC/IPC classifications and chemical sequences, provides additional safeguards against translation-related errors.

NLP Applications in Patent Retrieval

Semantic Search for Prior Art

Traditional keyword searches often fall short because they only return exact matches. For instance, searching for "autonomous vehicle" won't uncover patents that describe the same technology but use terms like "self-driving car." NLP tackles this issue by focusing on meaning rather than just words.

With semantic search, your query is transformed into a mathematical vector. The system then identifies patents with vectors that closely align with yours in a multi-dimensional space. This means conceptually similar patents are retrieved even when terminology differs. Missing even one relevant document can jeopardize patent validity.

The data paints a clear picture. A study of 9.6 million patents revealed that keyword-based searches had an average effectiveness of only 29% in finding relevant documents. In emerging fields like eVTOL technology, this figure dropped to a mere 11%. Semantic search significantly improves outcomes, reducing false negatives by 30% to 60% compared to Boolean-only searches.

For optimal results, queries should be detailed, including the problem, technical approach, and alternatives. This helps the model better understand the invention's scope.

While semantic search enhances overall document retrieval, a similar level of precision is crucial when analyzing individual patent claims.

Claim-Based Search and Analysis

NLP also shines in analyzing the complex structure of patent claims. Unlike keyword tools that treat patents as flat text, NLP systems can break down claim hierarchies, distinguishing preambles from limitations and identifying how dependent claims refine the scope.

This capability is particularly valuable for tasks like freedom-to-operate (FTO) analysis, where determining whether a product or process infringes on an existing patent's claims is critical. NLP tools can match your query against specific claim limitations instead of the entire document, ensuring only the truly relevant claims are highlighted. Traditional search methods simply can't achieve this level of precision.

Combining Semantic Search, Keywords, and Classification Filters

The most effective patent retrieval strategies combine multiple search techniques. Semantic search, keyword queries, and classification filters like CPC or IPC codes work together to improve accuracy.

Classification codes serve as a foundation. They keep your search focused on the right technical domain, while semantic search handles variations in language. Keywords, meanwhile, are helpful for capturing specific technical terms or product names that need to appear exactly as written. Here's how this layered approach looks in practice: begin with a CPC code to define the technology area, use a semantic query to find conceptual matches, and then apply keyword filters to catch any domain-specific terminology the semantic model might overlook.

Patently's Vector AI integrates this approach seamlessly. It allows patent professionals to run semantic searches while using classification and date filters in the same workflow - without needing to switch tools. Applying a priority date filter early on is especially useful, as it ensures results only include documents that legally qualify as prior art before deeper analysis begins.

Multilingual Patent Search and Translation with NLP

Cross-Lingual Semantic Retrieval

Patent innovation knows no borders - prior art can be documented in any language. A groundbreaking invention might be filed in a Japanese patent, detailed in a German application, or outlined in a Korean utility model. Unfortunately, a keyword search in English won't uncover these.

This is where cross-lingual semantic retrieval steps in. Using models like paraphrase-multilingual-MiniLM-L12-v2, trained on parallel sentence pairs in over 50 languages (including non-Latin scripts), it aligns concepts across different jurisdictions. For example, when you submit a query in English, the system compares the query's vector directly with vectors from patents in Chinese, Arabic, Korean, and many other languages - without needing a translation step.

Interestingly, this approach results in only a 5–10% quality drop compared to same-language searches, while cutting out the costs and delays of translation APIs. For most searches, this trade-off is worth it. However, for critical tasks, combining techniques works best: cross-lingual embeddings cast a wide net to ensure nothing is overlooked, while translation-based re-ranking hones in on the most relevant results. Once the key documents are identified, precise translation becomes crucial.

Machine Translation for Patent Texts

After identifying relevant patents in foreign languages, the next hurdle is making them understandable through accurate translation. Here, general-purpose translation tools fall short. Patent language is notoriously complex, filled with legal jargon ("patentese"), specialized terms, and newly coined words that aren't in standard dictionaries. On top of that, structural differences between languages can lead to misinterpretations. For instance, while English follows a subject-verb-object (SVO) order, Japanese uses subject-object-verb (SOV), which can confuse translation systems.

This is where domain-specific machine translation models shine. Trained on patent-specific datasets, these models are better equipped to handle the nuances of patent claims, ensuring technical and legal accuracy. They understand how terms are defined within the context of a claim and how legal language translates across jurisdictions, making them far superior to generic tools.

Combining Multilingual Search and Translation in Patent Workflows

Bringing together cross-lingual retrieval and domain-specific translation creates a smooth and efficient patent search process. The semantic search layer identifies relevant foreign-language patents without requiring upfront translation. Once potential matches are found, machine translation makes those documents readable and actionable.

This combination is especially crucial for tasks like freedom-to-operate analysis and international filings, where missing a single patent could lead to legal complications. Platforms like Patently's Vector AI simplify this process by enabling patent professionals to perform global searches across languages and jurisdictions with a single query - no need to juggle multiple tools or language-specific indexes. As Andrew Crothers, Creative Director at Patently, explains:

"With Elastic, it's like having a patent attorney with decades of experience guiding every search."

One standout benefit? Embedding configurations have shown a 14.81% absolute Mean Average Precision (MAP) improvement over leading baselines in patent retrieval. This highlights just how critical the right model architecture is when navigating a global patent database.

Putting NLP-Driven Patent Retrieval into Practice

Key Components of an NLP-Based Patent Search System

An NLP-based patent search system isn't just one tool; it's a series of interconnected steps, each serving a specific purpose. It begins with data ingestion, where systems update in real time to ensure patent professionals always have access to the latest filings. Next comes preprocessing, where raw patent text is cleaned, tokenized, and structured to make it ready for analysis.

Handling lengthy documents is another critical aspect. Patent descriptions often exceed 11,000 tokens. Advanced models like GPT-4 and Llama-3.1, with their extended context windows of up to 128,000 tokens, can process these documents in full. This allows them to capture intricate details, such as specific embodiments and technical disclosures, that shorter-context models might overlook.

Finally, the retrieval layer uses vector indexing to connect queries with semantically similar documents. The success of this step depends on the embedding model and how the index is configured. Together, these components integrate seamlessly into established patent workflows.

Fitting NLP Tools into Patent Workflows

NLP tools, including the top patent tools for IP professionals, can be strategically woven into patent workflows at critical stages. For instance, during prior art searches, how you phrase your query matters. Describing the invention in full sentences - as if explaining it to a technical expert - helps the model retrieve conceptually relevant results instead of simply matching keywords.

When drafting and analyzing patents, NLP tools can assist by identifying unclaimed subject matter, suggesting additional keywords, or broadening search coverage. A great example is Patently’s AI drafting assistant, Onardo, which drafts patent specifications while simultaneously searching for prior art.

Here’s a quick look at how specific NLP techniques align with practical tasks in patent workflows:

NLP Technique | Application in Patent Workflow |

|---|---|

Named Entity Recognition | Identifies inventors, companies, and specific technologies |

Topic Modeling (LDA) | Groups related patents and uncovers technological trends |

Natural Language Generation | Automates drafting of patent specifications and summaries |

Machine Translation | Translates foreign patent documents with improved contextual accuracy |

"This powerful addition has positioned Patently as one of the most innovative platforms for semantic patent search and is core to our technology stack." - Jerome Spaargaren, Founder and Director, Patently

Integrating these tools effectively can boost both the efficiency and accuracy of patent-related tasks.

Quality Control and Risk Management in NLP-Assisted Work

While NLP tools bring immense potential, they must be paired with strong quality controls and risk management - especially in legal contexts, where errors can have serious consequences. Three key practices can help ensure reliability:

Explainability: The system should highlight the specific text or citations it used to generate outputs. This allows attorneys to verify the AI's reasoning against the original source.

Controlled Outputs: Setting the LLM's temperature to zero ensures consistent, factual responses, avoiding creative variations.

Strict Prompting: Clear instructions, like having the model respond with "Not disclosed in this patent" when information is absent, can prevent inaccuracies or hallucinations.

Data security is just as critical. Pre-filing patent documents often contain sensitive intellectual property, so any NLP tool used at this stage must include strong encryption and have clear policies about how submitted text is handled - particularly regarding model training.

"AI-related patents are crucial to securing ownership over discoveries and inventions." - Laurence Brown, Patently

While AI can narrow down options and map evidence, expert legal judgment remains essential for evaluating novelty and obviousness.

NLP: Improving Search Results with Semantic Search

Conclusion

Conducting a patent search can feel like navigating a maze. The language is dense, the number of documents overwhelming, and the added complexity of patents filed in various languages across jurisdictions only adds to the challenge. But NLP is reshaping how we approach this process. By focusing on the meaning of text rather than just matching keywords, NLP uncovers relevant prior art that traditional Boolean searches often overlook. In fact, semantic search has been shown to reduce false negatives by 30–60% compared to keyword-only methods, while also cutting analysis time by 40–50% for complex searches. These tools are not only improving local searches but are proving invaluable for global patent retrieval efforts.

The global aspect is crucial. More than 70% of global patent filings are in non-English languages, and about 25% of Chinese patents include technical details that can't be found in English. Cross-lingual semantic retrieval bridges this gap. It allows professionals to search foreign-language databases using plain English descriptions, eliminating the need for manual translations.

Platforms like Patently are already applying these advancements. Using its Vector AI, Patently transforms patent text into high-dimensional vectors, enabling conceptual matches across millions of documents. For example, a recent search powered by Patently's Vector AI completed in minutes what would traditionally take hours. This kind of efficiency is a game-changer for patent professionals.

FAQs

How is semantic patent search different from keyword search?

Semantic patent search takes patent retrieval to the next level by focusing on the meaning and context of patent content, rather than just matching exact words. Powered by Natural Language Processing (NLP) and AI, it goes beyond traditional keyword searches, which can often overlook relevant patents due to variations in terminology or the use of synonyms. Instead, semantic search identifies inventions that are conceptually similar, even if they’re described differently or in another language. This approach improves recall, saves time, and increases accuracy, making the patent search process far more efficient and effective.

Do I still need CPC/IPC codes if I use NLP search?

CPC (Cooperative Patent Classification) and IPC (International Patent Classification) codes play a key role in patent research, even with advancements in NLP (Natural Language Processing) technology. These codes offer a structured way to classify patents into specific technical categories.

By organizing patents systematically, CPC and IPC codes improve both search precision and coverage. This means researchers can uncover more relevant patents while ensuring they don’t miss critical results. In short, these classification systems complement NLP tools, making searches more effective and thorough.

How reliable is machine translation for foreign-language prior art?

Machine translation has made strides thanks to AI, making it easier to search foreign-language prior art and break down language barriers. However, it’s not perfect. When it comes to patent examination or litigation, machine translations often fall short in delivering the level of legal or technical precision required. For critical situations where accuracy is key, combining machine translation with expert review or certified translations is the safest way to avoid misinterpretation or overlooking important prior art.